Most tokenization platform guides stop at the surface: “you need smart contracts, a dashboard, and KYC.” That tells an engineering team nothing about how to actually build one.

This article goes deeper. We break down the architecture of a production tokenization platform into its six core subsystems, explain the design decisions at each layer, show how they integrate, and highlight the tradeoffs that separate a demo from an institutional-grade system. This is the architecture we have refined across multiple production deployments – including platforms handling regulated securities, real estate fractions, and agricultural investment instruments.

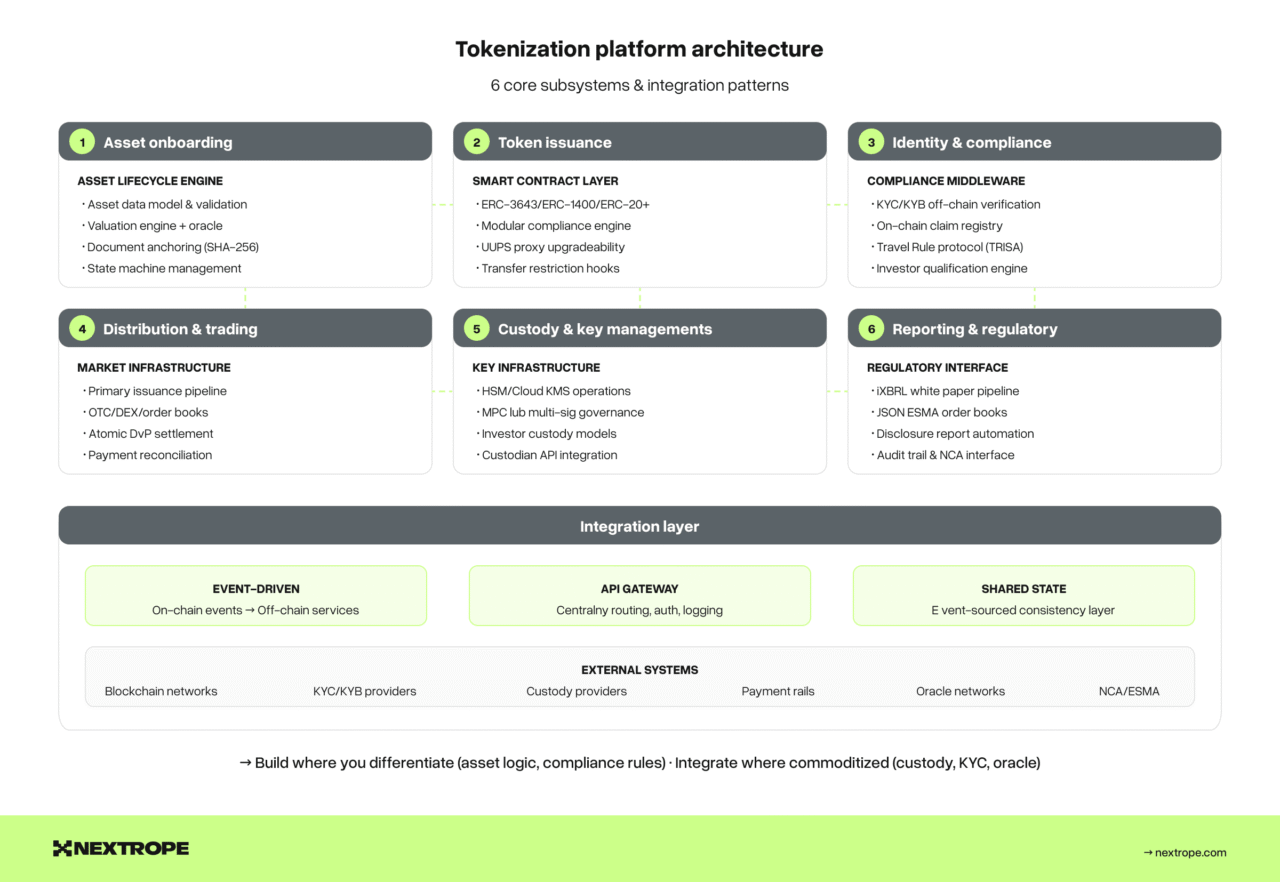

The six subsystems

A production tokenization platform is not a single application. It is a coordinated system of six subsystems, each with its own data model, failure modes, and scaling characteristics:

- Asset onboarding & lifecycle engine – ingestion, validation, valuation, and state management of underlying assets.

- Token issuance & smart contract layer – on-chain token logic, compliance enforcement, and standard selection.

- Identity & compliance middleware – KYC/KYB verification, investor accreditation, sanctions screening, and Travel Rule compliance.

- Distribution & trading infrastructure – primary issuance flows, secondary market integration, and settlement.

- Custody & key management – private key infrastructure, multi-sig governance, and custodian integration.

- Reporting & regulatory interface – white paper management, disclosure automation, audit trails, and communication with national competent authorities (NCAs).

Each subsystem can be built in-house, integrated from a vendor, or composed from open-source components. The architecture decision is not “build vs buy” globally – it is “build vs buy” at each subsystem, based on where your competitive advantage lies.

Let’s examine each one.

1. Asset onboarding & lifecycle engine

Before a single token is minted, the underlying asset must be validated, structured, and prepared for tokenization. This subsystem is often under-engineered: teams rush to smart contracts and discover later that their asset data model cannot support the operations they need.

Asset data model

Your asset data model must capture the full lifecycle state of the underlying asset, not just a static description. For real estate tokenization, this means:

- Legal structure metadata: SPV entity, jurisdiction, ownership chain, encumbrances.

- Valuation data: Initial appraisal, revaluation schedule, NAV calculation methodology, third-party valuation provider integration.

- Document repository: Title deeds, legal opinions, insurance policies, audit reports – all versioned and hash-anchored to the blockchain for tamper evidence.

- Lifecycle state machine: Draft → Under Review → Approved → Active → Distributing → Redeemed → Archived. Each state transition triggers downstream actions (token minting, distribution enabling, redemption activation).
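The lifecycle state machine can be sketched in a few lines. The Python below is a minimal, in-memory illustration that assumes strictly forward transitions in the order listed above (no rejection path). The action names (mint_tokens, enable_distribution, activate_redemption) and the choice of which transition triggers which action are hypothetical; a production engine would persist state and publish these actions as events rather than return them.

```python
from enum import Enum


class AssetState(Enum):
    DRAFT = "Draft"
    UNDER_REVIEW = "Under Review"
    APPROVED = "Approved"
    ACTIVE = "Active"
    DISTRIBUTING = "Distributing"
    REDEEMED = "Redeemed"
    ARCHIVED = "Archived"


# Allowed transitions: each state has exactly one successor (assumption: no rejection path).
NEXT_STATE = {
    AssetState.DRAFT: AssetState.UNDER_REVIEW,
    AssetState.UNDER_REVIEW: AssetState.APPROVED,
    AssetState.APPROVED: AssetState.ACTIVE,
    AssetState.ACTIVE: AssetState.DISTRIBUTING,
    AssetState.DISTRIBUTING: AssetState.REDEEMED,
    AssetState.REDEEMED: AssetState.ARCHIVED,
}

# Hypothetical downstream actions keyed by (from_state, to_state).
DOWNSTREAM_ACTIONS = {
    (AssetState.APPROVED, AssetState.ACTIVE): ["mint_tokens"],
    (AssetState.ACTIVE, AssetState.DISTRIBUTING): ["enable_distribution"],
    (AssetState.DISTRIBUTING, AssetState.REDEEMED): ["activate_redemption"],
}


class InvalidTransition(Exception):
    """Raised when a requested transition is not listed in NEXT_STATE."""


class Asset:
    def __init__(self, asset_id: str) -> None:
        self.asset_id = asset_id
        self.state = AssetState.DRAFT
        self.history: list[tuple[AssetState, AssetState]] = []

    def transition(self, target: AssetState) -> list[str]:
        """Move to `target` if allowed and return the downstream actions to dispatch."""
        if NEXT_STATE.get(self.state) is not target:
            raise InvalidTransition(f"{self.state.value} -> {target.value} is not allowed")
        edge = (self.state, target)
        self.history.append(edge)
        self.state = target
        return list(DOWNSTREAM_ACTIONS.get(edge, []))


asset = Asset("re-001")
asset.transition(AssetState.UNDER_REVIEW)
asset.transition(AssetState.APPROVED)
print(asset.transition(AssetState.ACTIVE))  # ['mint_tokens']
```

Keeping the transition table as data (rather than scattered if-statements) makes it easy to review which transitions exist and which side effects they trigger.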

The critical design decision is whether your asset model is generic (supporting multiple asset classes through configurable schemas) or specialized (hardcoded for a single asset class). Generic models are more complex to build but essential if your platform serves multiple asset types. Specialized models ship faster but create technical debt when you expand.

Valuation engine

For asset-backed tokens, the on-chain token price must reflect off-chain asset value. This requires a valuation pipeline:

- Data ingestion: Pull valuations from third-party providers (appraisal firms, pricing feeds, NAV calculators) via API or manual upload with multi-signature approval.

- Staleness detection: If a valuation is older than a defined threshold, flag the asset and optionally pause new issuance. Stale valuations are a regulatory risk and an investor protection issue.

- Oracle publication: Publish validated valuations on-chain via an oracle contract. This can be a Chainlink-style decentralized oracle for public chains, or a permissioned oracle controlled by designated valuation agents for private/consortium chains.
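The staleness rule from the pipeline above can be sketched as a pure function. This is a minimal illustration, assuming a configurable maximum age and a policy of never publishing stale values to the oracle; the 90-day threshold, the provider name, and the field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Valuation:
    asset_id: str
    provider: str         # hypothetical provider identifier
    valued_at: datetime   # must be timezone-aware


@dataclass(frozen=True)
class StalenessResult:
    asset_id: str
    stale: bool
    age: timedelta
    pause_issuance: bool
    publish_to_oracle: bool


def check_staleness(
    valuation: Valuation,
    max_age: timedelta,
    now: datetime,
    pause_when_stale: bool = True,
) -> StalenessResult:
    """Flag a valuation older than `max_age`; stale values are not published on-chain."""
    if valuation.valued_at.tzinfo is None or now.tzinfo is None:
        raise ValueError("use timezone-aware datetimes")
    age = now - valuation.valued_at
    stale = age > max_age
    return StalenessResult(
        asset_id=valuation.asset_id,
        stale=stale,
        age=age,
        pause_issuance=stale and pause_when_stale,  # "optionally pause new issuance"
        publish_to_oracle=not stale,
    )


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
result = check_staleness(
    Valuation("re-002", "appraiser-x", datetime(2024, 1, 1, tzinfo=timezone.utc)),
    max_age=timedelta(days=90),  # hypothetical threshold
    now=now,
)
print(result.stale, result.pause_issuance, result.age.days)  # True True 181
```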

The oracle design is a significant architectural choice. Push oracles (issuer publishes on schedule) are simpler but introduce latency. Pull oracles (consumers request on-demand) are more responsive but more complex and expensive in gas.

Document anchoring

Every material document associated with the asset should have its hash stored on-chain. This does not mean storing documents on-chain – that is prohibitively expensive and unnecessary. Instead:

- Store the document in an off-chain document management system (IPFS, cloud storage, or dedicated document vault).

- Compute a SHA-256 hash of the document.

- Store the hash on-chain in a DocumentRegistry contract, associated with the asset ID and timestamp.

- Any party can independently verify document integrity by hashing the off-chain document and comparing it to the on-chain record.

This pattern provides tamper evidence without on-chain storage costs, and is increasingly expected by regulators as part of transparency requirements.
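The hash-and-verify flow can be sketched with Python's standard hashlib. Here an in-memory class stands in for the DocumentRegistry contract; that substitution, the class name, and the record fields are assumptions for illustration, and a real deployment would write the hash in a transaction and rely on the block timestamp.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def sha256_hex(document: bytes) -> str:
    """Hex-encoded SHA-256 digest of the raw document bytes."""
    return hashlib.sha256(document).hexdigest()


@dataclass(frozen=True)
class AnchorRecord:
    asset_id: str
    doc_hash: str
    anchored_at: datetime


class InMemoryDocumentRegistry:
    """Hypothetical stand-in for the on-chain DocumentRegistry contract."""

    def __init__(self) -> None:
        self._records: dict[tuple[str, str], AnchorRecord] = {}

    def anchor(self, asset_id: str, document: bytes) -> AnchorRecord:
        # Only the hash is stored; the document itself stays off-chain.
        record = AnchorRecord(asset_id, sha256_hex(document), datetime.now(timezone.utc))
        self._records[(asset_id, record.doc_hash)] = record
        return record

    def verify(self, asset_id: str, document: bytes) -> bool:
        """Anyone holding the off-chain file can recompute the hash and compare."""
        return (asset_id, sha256_hex(document)) in self._records


registry = InMemoryDocumentRegistry()
deed = b"Title deed, version 1"
registry.anchor("re-001", deed)
print(registry.verify("re-001", deed))                 # True
print(registry.verify("re-001", deed + b" (edited)"))  # False
```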

2. Token issuance & smart contract layer

This is the subsystem that gets the most attention, but it is only one-sixth of the platform. The key decisions here are standard selection, compliance hook architecture, and upgradeability strategy.

Standard selection

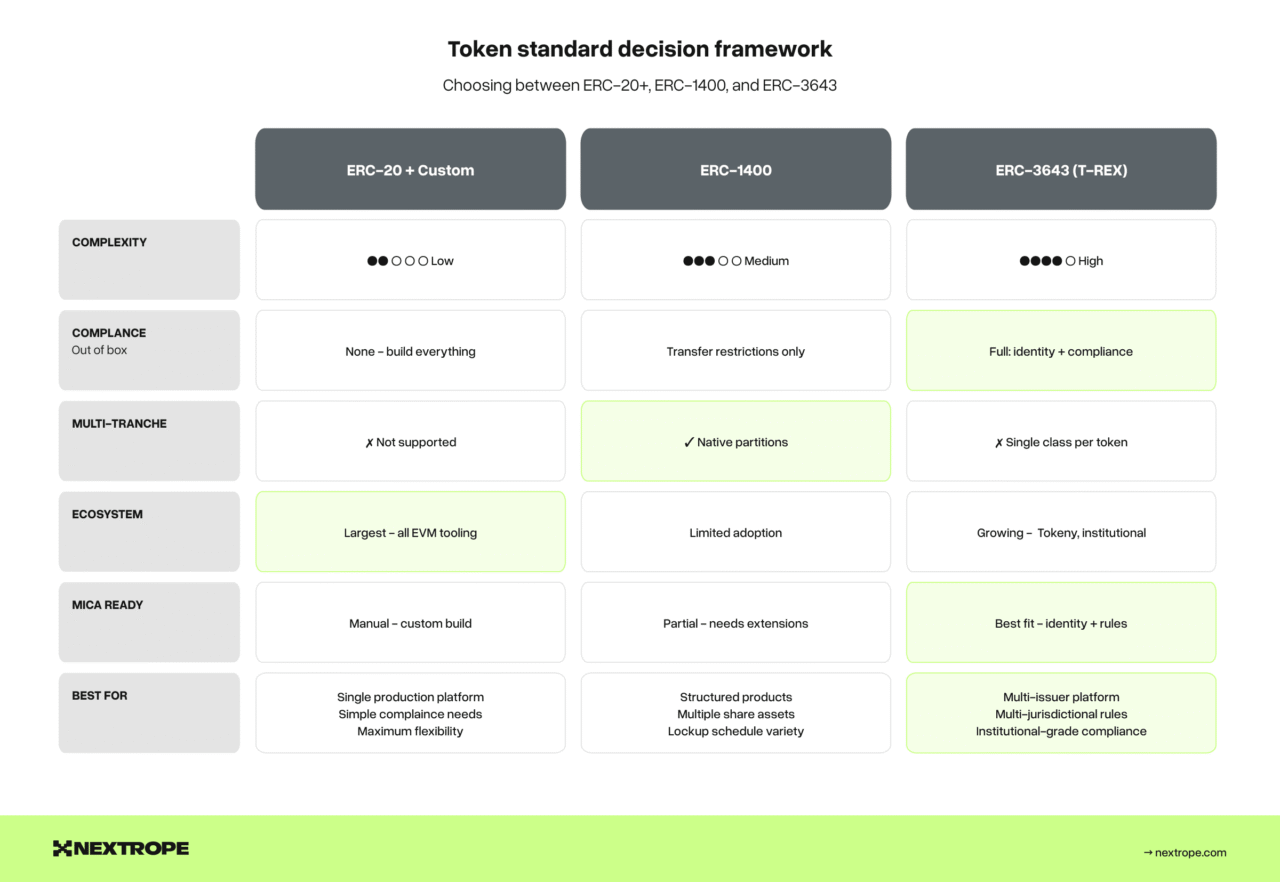

The three dominant standards for tokenized securities and regulated assets are:

ERC-20 with custom extensions – the simplest approach. You take the basic fungible token standard and add transfer restriction hooks (for example, overriding OpenZeppelin's _beforeTokenTransfer hook in Contracts 4.x, or _update in 5.x). Pros: maximum flexibility, minimal external dependencies. Cons: you must build all compliance logic yourself, there is little compliance-specific ecosystem tooling, and auditors must review custom code rather than a known standard.

ERC-1400 – the security token standard with built-in partition support. Tokens can be divided into tranches (partitions) with different transfer rules per tranche. Useful for structured products with multiple share classes, or for separating locked vs. freely transferable tokens. Pros: native tranche support, partial fungibility. Cons: less ecosystem adoption than ERC-3643, more complex token model.

ERC-3643 (T-REX) – the institutional-grade standard with integrated identity and compliance registries. Tokens are bound to an on-chain identity system (ONCHAINID) and every transfer is validated against a modular compliance framework. Pros: richest compliance out of the box, growing institutional adoption, Tokeny ecosystem. Cons: heavier dependency footprint, ONCHAINID integration required, potentially over-engineered for simple use cases.

For a detailed comparison of ERC-1400 and ERC-3643 in the context of MiCA compliance, see our ERC-3643 vs ERC-1400 analysis.

The decision framework: if you are building a single-product platform with simple compliance needs, start with ERC-20 + custom hooks. If you need multi-tranche support (multiple share classes, lockup schedules), consider ERC-1400. If you need institutional-grade compliance with multi-jurisdictional rules and plan to serve multiple issuers, ERC-3643 provides the most complete foundation.

Compliance hook architecture

Regardless of the token standard, every transfer must pass through a compliance check. The architectural pattern that scales is a modular compliance engine – a contract (or set of contracts) that evaluates transfer eligibility based on composable rules:

- Identity check: Both sender and receiver have valid identity claims in the claim registry.

- Investor qualification: Receiver meets the requirements for the token (accredited investor, qualified purchaser, jurisdiction-eligible).

- Transfer limits: Daily/monthly volume limits per investor, maximum holder count, concentration limits.

- Lockup enforcement: Time-based transfer restrictions for primary issuance participants.

- Regulatory holds: Admin-imposed holds on specific addresses (freeze orders from competent authorities).

Each rule is implemented as an independent module that can be added, removed, or updated without redeploying the token contract. The token contract calls a central ComplianceRegistry that iterates through active modules and returns a pass/fail result.
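The control flow of a modular compliance engine can be sketched in Python for readability; on-chain, each module and the registry would be Solidity contracts called from the token's transfer path. The module names, the timestamp-based lockup, and the (passed, failed_modules) return shape are assumptions for illustration, not the ERC-3643 interface.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Transfer:
    sender: str
    receiver: str
    amount: int
    timestamp: int  # Unix seconds


class ComplianceModule(Protocol):
    name: str

    def check(self, transfer: Transfer) -> bool: ...


@dataclass
class IdentityModule:
    verified: set[str]  # addresses holding a valid identity claim
    name: str = "identity"

    def check(self, transfer: Transfer) -> bool:
        return transfer.sender in self.verified and transfer.receiver in self.verified


@dataclass
class LockupModule:
    unlock_at: dict[str, int]  # address -> earliest allowed transfer time
    name: str = "lockup"

    def check(self, transfer: Transfer) -> bool:
        return transfer.timestamp >= self.unlock_at.get(transfer.sender, 0)


@dataclass
class RegulatoryHoldModule:
    frozen: set[str]  # addresses under a freeze order
    name: str = "regulatory_hold"

    def check(self, transfer: Transfer) -> bool:
        return transfer.sender not in self.frozen and transfer.receiver not in self.frozen


class ComplianceRegistry:
    """Evaluates every active module; modules can be swapped without touching the token."""

    def __init__(self) -> None:
        self._modules: dict[str, ComplianceModule] = {}

    def add_module(self, module: ComplianceModule) -> None:
        self._modules[module.name] = module  # same name replaces the old version

    def remove_module(self, name: str) -> None:
        self._modules.pop(name, None)

    def can_transfer(self, transfer: Transfer) -> tuple[bool, list[str]]:
        failed = [name for name, m in self._modules.items() if not m.check(transfer)]
        return (not failed, failed)


registry = ComplianceRegistry()
registry.add_module(IdentityModule(verified={"alice", "bob"}))
registry.add_module(LockupModule(unlock_at={"alice": 1_000}))
print(registry.can_transfer(Transfer("alice", "bob", 10, timestamp=500)))  # (False, ['lockup'])
```

Returning the names of failed modules, rather than a bare boolean, gives the audit trail a reason for each rejection.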

This modularity is not just good engineering. MiCA does not prescribe a smart contract architecture, but regulatory rules evolve and your contracts must adapt without disrupting existing token holders, so in practice modularity is a requirement for MiCA compliance. See our MiCA compliance checklist for the specific rules that must be encoded.

Upgradeability strategy

Tokenization platforms need upgradeable contracts. Regulatory requirements change, compliance rules evolve, and bugs need to be fixed without migrating token holders to new contracts. The three production-proven patterns:

Transparent proxy (built on ERC-1967 storage slots): The most widely used pattern. A proxy contract delegates all non-admin calls to an implementation contract, while upgrade calls are accepted only from a dedicated admin. Upgrading means pointing the proxy to a new implementation. Storage layout must be carefully managed across upgrades. Well-understood, well-audited, supported by OpenZeppelin.

UUPS (Universal Upgradeable Proxy Standard, ERC-1822): Similar to transparent proxy, but the upgrade logic lives in the implementation contract rather than the proxy. Slightly more gas-efficient, and the upgrade function can be protected with custom access control logic. The tradeoff: if an upgrade ships an implementation without the upgrade function, the proxy can no longer be upgraded. Our preferred pattern for new deployments.

Diamond pattern (ERC-2535): For complex platforms with many functions, the diamond pattern allows splitting contract logic across multiple facets (modules) that share a single storage context. Useful when your token contract needs to support dozens of functions across compliance, governance, and lifecycle management. Trade-off: significantly more complex to implement, test, and audit.

For most tokenization platforms, UUPS provides the right balance of upgradeability, gas efficiency, and auditability.
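To make "storage layout must be carefully managed" concrete, the sketch below checks that an upgrade only appends state variables. It is a deliberate simplification, assuming each variable occupies one position in declaration order; it ignores slot packing, inheritance ordering, and storage gaps, which tools such as the OpenZeppelin Upgrades plugins analyze from the compiler's storage-layout output. It also conservatively flags renames, which do not corrupt storage by themselves.

```python
Variable = tuple[str, str]  # (name, Solidity type), in declaration order


def storage_layout_errors(old: list[Variable], new: list[Variable]) -> list[str]:
    """Return problems that would corrupt proxy storage; an empty list means append-only."""
    errors = []
    # Every existing position must keep the same variable and type.
    for position, (before, after) in enumerate(zip(old, new)):
        if before != after:
            errors.append(
                f"position {position}: {before[0]} {before[1]} became {after[0]} {after[1]}"
            )
    # Removing trailing variables is also unsafe: later additions would reuse their slots.
    for name, type_ in old[len(new):]:
        errors.append(f"{name} {type_} was removed")
    return errors


v1 = [("_owner", "address"), ("_totalSupply", "uint256")]
v2 = v1 + [("_compliance", "address")]                          # safe: appended
v2_bad = [("_totalSupply", "uint256"), ("_owner", "address")]   # unsafe: reordered
print(storage_layout_errors(v1, v2))            # []
print(len(storage_layout_errors(v1, v2_bad)))   # 2
```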

3. Identity & compliance middleware

This subsystem mediates between the off-chain world of identity verification and the on-chain world of token transfers. It is the most integration-heavy subsystem and the one most likely to cause delays if underestimated.

Identity architecture

The core pattern is a two-layer identity system:

Off-chain identity store: Your KYC/KYB provider (Sumsub, Onfido, Jumio, or custom) verifies investor identity and stores PII in a secure, GDPR-compliant database. This data never goes on-chain.

On-chain claim registry: When an investor passes KYC, your middleware issues an on-chain identity claim – a cryptographic attestation that the address holder has been verified, without revealing any PII. The claim includes: claim type (KYC passed, accredited investor, jurisdiction), issuer (your platform’s claim issuer address), expiry date, and a signature.

Token transfer logic checks the on-chain claim registry, not the off-chain database. This separation is critical: it keeps PII off-chain while enabling permissionless compliance verification on-chain.
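The check a transfer performs against the claim registry can be sketched as follows, assuming a claim carries subject address, claim type, issuer, expiry, and signature as described above. The claim-type labels are hypothetical, and signature verification is injected as a function. The demo signer uses HMAC with a shared key purely so the example runs with the standard library; real claims use public-key signatures (for example ECDSA) so that anyone can verify them.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Claim:
    subject: str      # investor wallet address; no PII
    claim_type: str   # hypothetical labels, e.g. "KYC_PASSED", "ACCREDITED"
    issuer: str       # platform claim-issuer address
    expires_at: int   # Unix seconds
    signature: bytes

    def payload(self) -> bytes:
        return f"{self.subject}|{self.claim_type}|{self.issuer}|{self.expires_at}".encode()


def has_valid_claim(
    claims: Iterable[Claim],
    subject: str,
    claim_type: str,
    trusted_issuers: set[str],
    now: int,
    verify_signature: Callable[[Claim], bool],
) -> bool:
    """True if a claim matches subject and type, comes from a trusted issuer,
    has not expired, and carries a valid signature."""
    return any(
        c.subject == subject
        and c.claim_type == claim_type
        and c.issuer in trusted_issuers
        and c.expires_at > now
        and verify_signature(c)
        for c in claims
    )


# DEMO ONLY: HMAC with a shared key stands in for the issuer's public-key signature.
_DEMO_KEY = b"demo-issuer-key"


def demo_issue(subject: str, claim_type: str, issuer: str, expires_at: int) -> Claim:
    unsigned = Claim(subject, claim_type, issuer, expires_at, b"")
    sig = hmac.new(_DEMO_KEY, unsigned.payload(), hashlib.sha256).digest()
    return Claim(subject, claim_type, issuer, expires_at, sig)


def demo_verify(claim: Claim) -> bool:
    expected = hmac.new(_DEMO_KEY, claim.payload(), hashlib.sha256).digest()
    return hmac.compare_digest(expected, claim.signature)


kyc = demo_issue("0xInvestor", "KYC_PASSED", "0xPlatformIssuer", expires_at=2_000_000_000)
print(has_valid_claim([kyc], "0xInvestor", "KYC_PASSED", {"0xPlatformIssuer"},
                      now=1_700_000_000, verify_signature=demo_verify))  # True
```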

If using ERC-3643, the ONCHAINID framework provides this infrastructure out of the box. For other standards, you will need to build or integrate a claim registry, which is a relatively straightforward contract but requires careful key management for the claim issuer.

Investor qualification engine

Beyond basic KYC, institutional-grade platforms need to verify investor qualifications:

- Accreditation status: Qualified investor status under the EU Prospectus Regulation, professional client classification under MiFID II, accredited investor status under Reg D (if serving US investors).

- Jurisdiction eligibility: The investor’s jurisdiction must be compatible with the token’s offering rules. Some tokens cannot be sold to US persons; others cannot be sold outside the EU.

- Investment limits: Retail investors may have per-token or aggregate investment caps under certain exemptions.

This qualification logic can live off-chain (simpler) or on-chain (more transparent, auditable). The hybrid approach is most practical: perform qualification checks off-chain, then encode the result as on-chain claims that the compliance modules verify during transfers.
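The off-chain half of the hybrid approach can be sketched as a function that either rejects an investor with reasons or returns the claims to issue on-chain. The rule fields, the claim labels (JURISDICTION_xx, RETAIL, PROFESSIONAL), and the example cap are hypothetical; real offering rules depend on the exemption and jurisdiction.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class InvestorProfile:
    jurisdiction: str          # ISO 3166-1 alpha-2 country code
    is_us_person: bool
    is_professional: bool      # qualified investor / professional client
    already_invested: Decimal  # amount already invested in this offering


@dataclass(frozen=True)
class OfferingRules:
    allowed_jurisdictions: frozenset[str]
    exclude_us_persons: bool
    professional_only: bool
    retail_cap: Optional[Decimal]  # per-investor cap for retail investors, if any


def qualify(
    investor: InvestorProfile, rules: OfferingRules, subscription: Decimal
) -> tuple[list[str], list[str]]:
    """Off-chain qualification. Returns (claims_to_issue, rejection_reasons)."""
    reasons = []
    if investor.jurisdiction not in rules.allowed_jurisdictions:
        reasons.append("jurisdiction not eligible")
    if rules.exclude_us_persons and investor.is_us_person:
        reasons.append("US persons excluded")
    if rules.professional_only and not investor.is_professional:
        reasons.append("professional investors only")
    if (
        not investor.is_professional
        and rules.retail_cap is not None
        and investor.already_invested + subscription > rules.retail_cap
    ):
        reasons.append("retail investment cap exceeded")
    if reasons:
        return [], reasons
    tier = "PROFESSIONAL" if investor.is_professional else "RETAIL"
    return [f"JURISDICTION_{investor.jurisdiction}", tier], []


rules = OfferingRules(frozenset({"DE", "FR", "PL"}), exclude_us_persons=True,
                      professional_only=False, retail_cap=Decimal("10000"))
investor = InvestorProfile("PL", is_us_person=False, is_professional=False,
                           already_invested=Decimal("8000"))
print(qualify(investor, rules, Decimal("1000")))  # (['JURISDICTION_PL', 'RETAIL'], [])
print(qualify(investor, rules, Decimal("5000")))  # ([], ['retail investment cap exceeded'])
```

The compliance modules then only need to check for the presence and validity of these claims, which keeps on-chain logic small.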

Travel Rule integration

Under the EU Transfer of Funds Regulation (TFR), which applies alongside MiCA, every crypto-asset transfer between regulated entities must include originator and beneficiary information. Your platform needs to integrate with a Travel Rule protocol:

- TRISA (Travel Rule Information Sharing Architecture) – open protocol, growing adoption.

- Notabene – commercial Travel Rule solution with broad VASP coverage.

- Shyft/Veriscope – blockchain-native Travel Rule compliance.

The integration point is your transaction processing pipeline: before executing a transfer to an external address, query the Travel Rule network to identify the counterparty VASP and exchange required information.
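The ordering that matters here (resolve the counterparty and exchange data before the transfer is signed and broadcast) can be sketched as a gate in the transaction pipeline. The TravelRuleNetwork interface, its method names, and the three outcomes are hypothetical; TRISA, Notabene, and Veriscope each expose their own APIs, which an adapter would wrap. How self-hosted addresses are handled is left out of this sketch and simply routed to review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Protocol


@dataclass(frozen=True)
class Party:
    name: str
    wallet: str


@dataclass(frozen=True)
class TravelRulePayload:
    originator: Party
    beneficiary: Party
    asset: str
    amount: int


class TravelRuleNetwork(Protocol):
    """Hypothetical adapter over a Travel Rule provider."""

    def resolve_vasp(self, wallet: str) -> Optional[str]: ...

    def exchange(self, vasp_id: str, payload: TravelRulePayload) -> bool: ...


class Decision(Enum):
    CLEARED = "cleared"            # counterparty acknowledged the data
    BLOCKED = "blocked"            # exchange failed: do not broadcast
    NEEDS_REVIEW = "needs_review"  # no VASP found: possibly a self-hosted wallet


def travel_rule_gate(network: TravelRuleNetwork, payload: TravelRulePayload) -> Decision:
    """Runs before the on-chain transfer is signed and broadcast."""
    vasp_id = network.resolve_vasp(payload.beneficiary.wallet)
    if vasp_id is None:
        return Decision.NEEDS_REVIEW
    if not network.exchange(vasp_id, payload):
        return Decision.BLOCKED
    return Decision.CLEARED


class FakeNetwork:
    """Test double with a static address-to-VASP directory."""

    def __init__(self, directory: dict[str, str]) -> None:
        self.directory = directory
        self.sent: list[str] = []

    def resolve_vasp(self, wallet: str) -> Optional[str]:
        return self.directory.get(wallet)

    def exchange(self, vasp_id: str, payload: TravelRulePayload) -> bool:
        self.sent.append(vasp_id)
        return True


net = FakeNetwork({"0xExchangeDeposit": "vasp-123"})
alice = Party("Alice Example", "0xAlice")
decision = travel_rule_gate(net, TravelRulePayload(alice, Party("Bob Example", "0xExchangeDeposit"), "RE-TOKEN", 10))
print(decision)
```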

4. Distribution & trading infrastructure

Tokenized assets need to be distributed to investors (primary market) and, optionally, traded between investors (secondary market). The architecture differs significantly between these two flows.

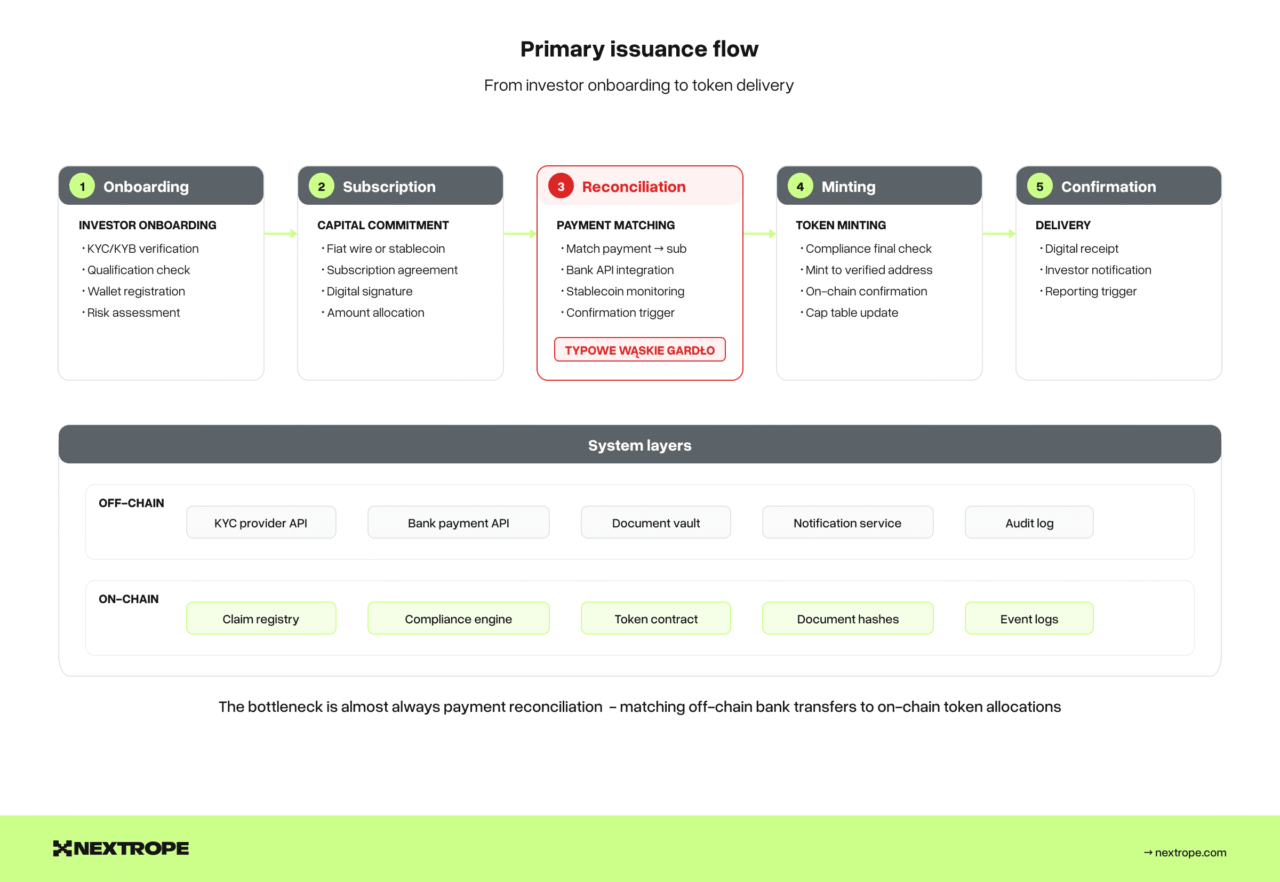

Primary issuance flow

The primary issuance pipeline handles the initial sale of tokens from issuer to investor:

- Investor onboarding: KYC/KYB verification, qualification check, wallet registration.

- Subscription: Investor commits capital (fiat wire or stablecoin transfer) and signs subscription agreement.

- Payment reconciliation: Match incoming payments to subscription commitments. For fiat, this requires bank API integration or manual reconciliation. For stablecoins, on-chain event monitoring.

- Token minting: Once payment is confirmed and all compliance checks pass, mint tokens to the investor’s verified address.

- Confirmation: Issue a digital receipt, update the cap table, and trigger any post-issuance reporting.

This flow is inherently sequential and cannot be fully automated without a payment integration layer. The bottleneck is almost always payment reconciliation, matching off-chain bank transfers to on-chain token allocations.
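A minimal reconciliation pass can be sketched as an exact match on payment reference and amount, with everything else routed to manual review. The reference scheme (investors quoting a subscription ID on the wire) and the field names are assumptions; real bank feeds often need fuzzier matching and partial-payment handling.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Subscription:
    reference: str      # payment reference the investor quotes on the wire
    investor_id: str
    amount: Decimal


@dataclass(frozen=True)
class IncomingPayment:
    payment_id: str     # bank transaction id
    reference: str
    amount: Decimal


def reconcile(
    subscriptions: list[Subscription], payments: list[IncomingPayment]
) -> tuple[list[tuple[Subscription, IncomingPayment]], list[tuple[str, str]]]:
    """Exact match on reference and amount. Returns (ready_to_mint, exceptions)."""
    open_subs = {s.reference: s for s in subscriptions}
    matched: list[tuple[Subscription, IncomingPayment]] = []
    exceptions: list[tuple[str, str]] = []
    for p in payments:
        sub = open_subs.get(p.reference)
        if sub is None:
            exceptions.append((p.payment_id, "unknown or already matched reference"))
        elif p.amount != sub.amount:
            exceptions.append((p.payment_id, f"expected {sub.amount}, received {p.amount}"))
        else:
            matched.append((sub, p))
            del open_subs[p.reference]  # each subscription is paid at most once
    return matched, exceptions


subs = [
    Subscription("SUB-001", "inv-1", Decimal("5000.00")),
    Subscription("SUB-002", "inv-2", Decimal("2500.00")),
]
payments = [
    IncomingPayment("bank-1", "SUB-001", Decimal("5000.00")),
    IncomingPayment("bank-2", "SUB-002", Decimal("2400.00")),  # underpaid
    IncomingPayment("bank-3", "SUB-001", Decimal("5000.00")),  # duplicate
]
ready, exceptions = reconcile(subs, payments)
print([s.reference for s, _ in ready])  # ['SUB-001']
print([pid for pid, _ in exceptions])   # ['bank-2', 'bank-3']
```

Only the matched pairs proceed to minting; Decimal avoids the rounding surprises that floats would introduce in payment amounts.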

Secondary market architecture

For secondary trading, you have three architectural options:

Integrated order book: Build a matching engine into your platform. This makes you a trading venue under MiCA (CASP authorization required), with full order book record-keeping in ESMA JSON format, pre/post-trade transparency requirements, and market abuse monitoring. Complex and heavily regulated, but provides full control.

DEX integration: List tokens on a permissioned DEX (Uniswap with whitelisted pools, or a dedicated permissioned AMM). Liquidity is handled by the AMM; your platform handles compliance pre-qualification (only whitelisted addresses can interact with the pool). Less regulatory burden on your platform, but you depend on external infrastructure.

OTC bulletin board: Provide a matching service where buyers and sellers express interest, then settle bilaterally. Lightest regulatory touch but poorest liquidity experience.

The choice depends on your regulatory appetite and liquidity needs. Most institutional platforms start with OTC and evolve toward integrated order books as volume justifies the compliance investment.

Settlement

Settlement in tokenized markets can be atomic (delivery-versus-payment in a single transaction) or staged. Atomic settlement using smart contracts is the tokenization gold standard. The token transfer and payment transfer happen in the same transaction, eliminating settlement risk. This requires:

- Payment in on-chain currency (stablecoin or tokenized deposit).

- A settlement contract that escrows both assets and executes the swap atomically.

- Integration with the compliance layer to verify both parties before settlement.

For fiat-settled trades, atomic on-chain settlement is not possible. You need a two-phase approach: lock tokens on-chain, confirm fiat payment off-chain, then release tokens. This introduces settlement risk that must be managed through escrow and timeout mechanisms.
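The two-phase flow can be modeled as a small state machine: tokens are locked, then either released on confirmed fiat payment or refunded after a timeout. This is an off-chain model for illustration; on-chain, a settlement contract would hold the tokens, and who confirms the fiat payment (for example a hypothetical settlement agent) is an assumption. Compliance checks are omitted here.

```python
from dataclasses import dataclass
from enum import Enum


class EscrowState(Enum):
    LOCKED = "locked"
    RELEASED = "released"
    REFUNDED = "refunded"


@dataclass
class FiatSettlementEscrow:
    """Phase 1 (construction): seller's tokens are locked. Phase 2: release on
    confirmed fiat payment, or refund the seller after the timeout."""

    seller: str
    buyer: str
    token_amount: int
    locked_at: int   # Unix seconds
    timeout_s: int
    state: EscrowState = EscrowState.LOCKED

    def _require_locked(self) -> None:
        if self.state is not EscrowState.LOCKED:
            raise RuntimeError(f"escrow already {self.state.value}")

    def _expired(self, now: int) -> bool:
        return now > self.locked_at + self.timeout_s

    def confirm_fiat_payment(self, now: int) -> str:
        """Called once the off-chain payment is confirmed; returns the token recipient."""
        self._require_locked()
        if self._expired(now):
            raise RuntimeError("payment window closed; refund the seller instead")
        self.state = EscrowState.RELEASED
        return self.buyer

    def refund_if_expired(self, now: int) -> str:
        """Returns tokens to the seller if fiat never arrived in time."""
        self._require_locked()
        if not self._expired(now):
            raise RuntimeError("payment window still open")
        self.state = EscrowState.REFUNDED
        return self.seller


trade = FiatSettlementEscrow("seller-wallet", "buyer-wallet", 100, locked_at=0, timeout_s=3600)
print(trade.confirm_fiat_payment(now=1800))  # buyer-wallet
```

Because every exit path requires the LOCKED state, tokens cannot be both released and refunded.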

5. Custody & key management

Private key management is the security foundation of your platform. A single compromised key can drain every token on the platform. This subsystem is non-negotiable for institutional credibility.

Key architecture

Production platforms need three categories of keys with different security levels:

Platform operational keys – used for routine operations: minting tokens, publishing oracle updates, issuing identity claims. These should be managed by an HSM (Hardware Security Module) or a cloud KMS (AWS KMS, Azure Key Vault, GCP Cloud KMS) with strict IAM policies. Require multi-party approval for sensitive operations (minting above a threshold, pausing contracts).

Investor custody keys – keys controlling investor token holdings. Three models exist: self-custody (investor manages their own keys), omnibus custody (platform or third-party custodian holds keys on behalf of investors), or hybrid (investors hold keys with platform as recovery agent). MiCA-regulated platforms using omnibus custody must integrate with an authorized custodian and implement segregation of client assets.

Admin/governance keys – keys controlling contract upgrades, compliance rule changes, and emergency operations (pause, freeze). These MUST use multi-sig (Gnosis Safe or equivalent) with time-lock delays for non-emergency operations. A single admin key is an existential security risk.
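The governance rule described above (M-of-N approvals plus a time-lock for non-emergency operations) can be sketched as a policy check. This models what a Safe multi-sig combined with a separate time-lock contract would enforce on-chain; it is not Safe's API, and the 3-of-5 signer set, the 48-hour delay, and the operation names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernancePolicy:
    signers: frozenset[str]
    threshold: int    # M in an M-of-N multi-sig
    timelock_s: int   # delay before non-emergency operations may execute


@dataclass(frozen=True)
class AdminOperation:
    kind: str         # e.g. "upgrade", "set_compliance_module", "pause"
    proposed_at: int  # Unix seconds
    emergency: bool


def can_execute(
    op: AdminOperation, approvals: set[str], policy: GovernancePolicy, now: int
) -> tuple[bool, str]:
    valid = approvals & policy.signers  # approvals from non-signers do not count
    if len(valid) < policy.threshold:
        return False, f"{len(valid)}/{policy.threshold} approvals"
    if not op.emergency and now < op.proposed_at + policy.timelock_s:
        return False, "timelock not elapsed"
    return True, "ok"


policy = GovernancePolicy(frozenset({"a", "b", "c", "d", "e"}), threshold=3, timelock_s=48 * 3600)
upgrade = AdminOperation("upgrade", proposed_at=0, emergency=False)
print(can_execute(upgrade, {"a", "b", "c"}, policy, now=3600))       # (False, 'timelock not elapsed')
print(can_execute(upgrade, {"a", "b", "c"}, policy, now=48 * 3600))  # (True, 'ok')
```

Emergency operations such as pause still need the signer threshold but skip the delay, which matches the "time-lock delays for non-emergency operations" rule.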

MPC vs multi-sig

For institutional custody, the two dominant approaches are:

Multi-party computation (MPC): The private key is never assembled in a single location. Key shares are distributed across multiple parties, and signatures are computed collaboratively without any party seeing the full key. Fireblocks, Fordefi, and similar institutional custody providers use this model. Advantages: no single point of compromise, key shares can be distributed geographically and organizationally. Disadvantages: vendor dependency, limited smart contract interaction patterns, recovery complexity.

Multi-signature (Multi-sig): Multiple independent keys must sign a transaction for it to be valid. Gnosis Safe (now Safe) is the most widely deployed multi-sig for EVM chains. Advantages: fully on-chain, transparent governance, well-audited, no vendor lock-in. Disadvantages: each signer must manage their own key securely, on-chain footprint (gas costs), less flexible threshold schemes than MPC.

For platform operational keys, multi-sig with a 2-of-3 or 3-of-5 scheme provides good security with operational practicality. For high-value custody (investor assets), MPC through an institutional custody provider is increasingly the expected standard.

6. Reporting & regulatory interface

The subsystem most teams build last – and most regulators ask about first. Under MiCA, reporting is not optional and has specific technical format requirements.

White paper lifecycle management

MiCA requires crypto-asset white papers in iXBRL format. Your platform needs a pipeline that:

- Takes structured asset and token data from subsystems 1 and 2.

- Maps it to ESMA’s XBRL taxonomy.

- Generates validated iXBRL output.

- Manages versioning – material changes require updated publication and NCA notification.

- Feeds into ESMA’s public register via the competent authority.

If your platform serves multiple issuers, this becomes a self-service white paper generation tool – a meaningful product feature.

Audit trail

Every state change across every subsystem must be logged with sufficient detail for regulatory examination:

- Who initiated the action (authenticated user/address).

- What changed (before and after states).

- When it happened (timestamp with timezone).

- Why it was authorized (compliance check results, approval records).

This is not just application logging – it is a structured audit trail that can be exported in formats regulators expect. DORA’s operational resilience requirements make this mandatory for MiCA-regulated entities.
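A structured audit record covering who, what, when, and why can be sketched as an immutable dataclass with a line-delimited JSON export. The field names and the JSON Lines export format are assumptions for illustration, not a regulator-defined schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class AuditEvent:
    subsystem: str
    actor: str                     # who: authenticated user id or wallet address
    action: str
    before: dict[str, Any]         # what: state before the change
    after: dict[str, Any]          # what: state after the change
    occurred_at: str               # when: ISO 8601 timestamp with UTC offset
    authorization: dict[str, Any]  # why: compliance results, approval records


def record_event(
    subsystem: str,
    actor: str,
    action: str,
    before: dict[str, Any],
    after: dict[str, Any],
    authorization: dict[str, Any],
    now: Optional[datetime] = None,
) -> AuditEvent:
    ts = now if now is not None else datetime.now(timezone.utc)
    if ts.tzinfo is None:
        raise ValueError("audit timestamps must carry a timezone")
    return AuditEvent(subsystem, actor, action, before, after, ts.isoformat(), authorization)


def export_jsonl(events: list[AuditEvent]) -> str:
    """One JSON object per line (hypothetical export format)."""
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in events)


event = record_event(
    "issuance", "user:ops-1", "mint",
    before={"supply": 0}, after={"supply": 1000},
    authorization={"compliance": "pass", "approvals": ["signer-a", "signer-b"]},
    now=datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
)
print(event.occurred_at)  # 2024-01-01T12:00:00+00:00
```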

Regulatory reporting pipeline

For platforms operating trading venues, ESMA mandates order book records in standardized JSON format. Your trading subsystem must log all orders and trades in this schema in real time – not reconstructed after the fact.

For ART/EMT issuers, periodic disclosure reports (reserve composition, redemption statistics, audit results) must be generated and published on schedule. Automate this pipeline from the start; manual report generation does not scale and introduces error risk.

Integration architecture: How the subsystems connect

The six subsystems communicate through three integration patterns:

Event-driven architecture

On-chain events (token transfers, minting, compliance check results) are emitted as blockchain events and consumed by off-chain services via event listeners. This decouples on-chain and off-chain logic and allows asynchronous processing. Use a reliable event indexer (The Graph, custom indexer, or a blockchain node with event filtering) to avoid missed events.
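One way to avoid acting on events that later disappear in a block reorganization is to deliver them only after a confirmation depth, and to halt if a block already delivered is no longer canonical. The sketch below assumes the node's canonical chain is given as a list indexed by block number; the confirmation depth of 2 is purely illustrative, and on Ethereum waiting for the "finalized" block is a common alternative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Block:
    number: int
    block_hash: str
    events: tuple[str, ...]


class ConfirmedEventIndexer:
    """Delivers events only once `confirmations` blocks sit on top of them."""

    def __init__(self, confirmations: int) -> None:
        self.confirmations = confirmations
        self.last_processed: Optional[Block] = None

    def poll(self, chain: list[Block]) -> list[str]:
        """`chain` stands in for the node's canonical chain; chain[n].number == n."""
        head = chain[-1].number
        start = 0
        if self.last_processed is not None:
            last = self.last_processed.number
            if head < last:
                return []  # node is behind what we already processed; wait
            if chain[last].block_hash != self.last_processed.block_hash:
                # A reorg replaced a block we already delivered: stop and alert.
                raise RuntimeError(f"reorg deeper than {self.confirmations} confirmations at block {last}")
            start = last + 1
        delivered: list[str] = []
        for number in range(start, head - self.confirmations + 1):
            delivered.extend(chain[number].events)
            self.last_processed = chain[number]
        return delivered


chain_a = [Block(0, "a0", ()), Block(1, "a1", ("Mint#1",)), Block(2, "a2", ()),
           Block(3, "a3", ("Transfer#2",)), Block(4, "a4", ())]
indexer = ConfirmedEventIndexer(confirmations=2)
print(indexer.poll(chain_a))  # ['Mint#1'] (blocks 3 and 4 are not yet confirmed)
# A shallow reorg replaces blocks 3-4 before they were delivered: harmless.
chain_b = chain_a[:3] + [Block(3, "b3", ("Transfer#3",)), Block(4, "b4", ()), Block(5, "b5", ())]
print(indexer.poll(chain_b))  # ['Transfer#3']
```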

API gateway

Off-chain subsystems (identity, valuation, custody) expose functionality through a central API gateway. The gateway handles authentication, rate limiting, and routing. All subsystem-to-subsystem communication passes through the gateway, providing a single point for logging, monitoring, and access control.

Shared state layer

Some data must be consistent across multiple subsystems: investor status (identity + qualification + wallet mapping), asset state (lifecycle stage + valuation + token supply), and compliance rules (active modules + parameters). A shared state layer, typically a database with event sourcing, ensures all subsystems work from consistent data.

The anti-pattern to avoid: direct subsystem-to-subsystem coupling. If your issuance engine directly calls your KYC provider’s API, and your trading engine also directly calls it, you have duplicated integration logic, inconsistent error handling, and no central audit trail. Route everything through the middleware layer.

Architecture decision record: Key tradeoffs

Every platform makes these decisions. Here is how we think about them:

Public chain vs private chain: Public chains (Ethereum, Polygon, Base) provide maximum composability, liquidity access, and credibility. Private chains (Hyperledger Fabric, Corda) provide privacy and control but fragment liquidity. For institutional tokenization targeting secondary market liquidity, public chains with permissioned smart contracts (the ERC-3643 model) are increasingly the default. Private chains are appropriate for specific use cases like interbank settlement or consortium-specific instruments.

Monolith vs microservices: For early-stage platforms (single asset class, single jurisdiction), a modular monolith is faster to build and easier to operate. Decompose into microservices when you have clear scaling bottlenecks or need independent deployment of subsystems (e.g., trading engine scaling independently from identity verification).

Single-chain vs multi-chain: Start with one chain. Multi-chain support is architecturally expensive and rarely justified until you have product-market fit. When you do add chains, abstract chain-specific logic behind a chain adapter layer so your business logic remains chain-agnostic.

Build vs integrate per subsystem: Build where you differentiate (asset onboarding logic, compliance rules specific to your asset class). Integrate where commoditized (KYC providers, custody, oracle infrastructure). Never build your own custody from scratch unless you are a licensed custodian.
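The chain adapter layer recommended above for multi-chain support can be sketched as an interface that business logic depends on, with one implementation per chain. The two-method ChainAdapter interface and the in-memory test double are hypothetical; a real adapter would wrap a chain client (for example web3.py for EVM chains) behind the same methods.

```python
from typing import Protocol


class ChainAdapter(Protocol):
    """Hypothetical interface: business logic sees only these methods."""

    chain_id: int

    def mint(self, to: str, amount: int) -> str: ...  # returns a transaction id

    def balance_of(self, holder: str) -> int: ...


class InMemoryChainAdapter:
    """Test double implementing ChainAdapter without any network access."""

    def __init__(self, chain_id: int) -> None:
        self.chain_id = chain_id
        self._balances: dict[str, int] = {}
        self._tx_count = 0

    def mint(self, to: str, amount: int) -> str:
        self._balances[to] = self._balances.get(to, 0) + amount
        self._tx_count += 1
        return f"{self.chain_id}:tx-{self._tx_count}"

    def balance_of(self, holder: str) -> int:
        return self._balances.get(holder, 0)


def issue_tokens(adapter: ChainAdapter, wallet: str, amount: int) -> str:
    """Chain-agnostic business logic: validation lives here, chain details do not."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return adapter.mint(wallet, amount)


adapter = InMemoryChainAdapter(chain_id=1)
print(issue_tokens(adapter, "0xInvestor", 100))  # 1:tx-1
print(adapter.balance_of("0xInvestor"))          # 100
```

Adding a chain then means writing one more adapter, while issuance, compliance, and reporting code stay unchanged; the in-memory adapter doubles as a fixture for integration tests.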

From architecture to production

Architecture diagrams are necessary but not sufficient. The gap between architecture and production is bridged by:

Testing at the integration boundary. Unit tests for smart contracts are table stakes. What breaks in production is the integration between subsystems: the event listener that misses a block reorganization, the KYC callback that times out during primary issuance, the oracle update that publishes a stale price. Test these boundaries explicitly.

Monitoring the off-chain/on-chain seam. Your platform has one foot on-chain and one foot off-chain. Monitor both: on-chain transaction confirmation times, gas prices, contract event emissions. Off-chain: API response times, KYC provider availability, payment reconciliation latency. The seam between them is where incidents happen.

Operational runbooks for regulated operations. When a regulator orders an asset freeze, how quickly can your team execute it? When an oracle publishes an incorrect valuation, what is the rollback procedure? These are not edge cases in regulated infrastructure – they are operational scenarios you must plan for.

Nextrope designs and builds tokenization platform architecture for financial institutions across Europe. Our engineering team has delivered production platforms handling regulated securities, real estate fractions, and DeFi lending instruments – including systems for Alior Bank and SOIL. If you are evaluating architecture decisions for your tokenization platform, let’s talk.