What you get

Backend and indexing infrastructure turns raw blockchain events into data your operations can run on. We deliver production-grade systems that handle that data reliably at scale.

This is the layer most teams underestimate and where production systems often break.

Backend services & APIs

Build service layers that orchestrate workflows and integrate with your stack. Output: APIs, service boundaries, and operational tooling.

Custom indexing

Index smart contract and XRPL activity into queryable datasets. Output: indexers tailored to your schema, use cases, and reporting needs.

Event-driven pipelines

Transform, enrich, and route events across systems reliably. Output: pipelines with retries, idempotency, and clear failure handling.

Data integrity & consistency checks

Detect gaps, duplicates, and mismatches across systems. Output: consistency checks and alerting around critical business invariants.

Reporting outputs

Make data usable for ops, finance, and product teams. Output: exports, dashboard-ready tables, and audit-friendly outputs.

Observability & production hardening

Make systems measurable and debuggable under load. Output: metrics, logs, alerts, and runbooks for live operations.

Core architecture

Event ingestion

Chain listeners and XRPL ingestion.

Normalization layer

Schema and business event modeling.

Processing layer

Transformations, enrichment, retries, idempotency.

Storage layer

Queryable datasets designed for reporting.

Serving layer

APIs, exports, and downstream integrations.

Ops layer

Monitoring, alerts, and incident runbooks.

Common use cases

Backend and indexing scenarios where production reliability is non-negotiable.

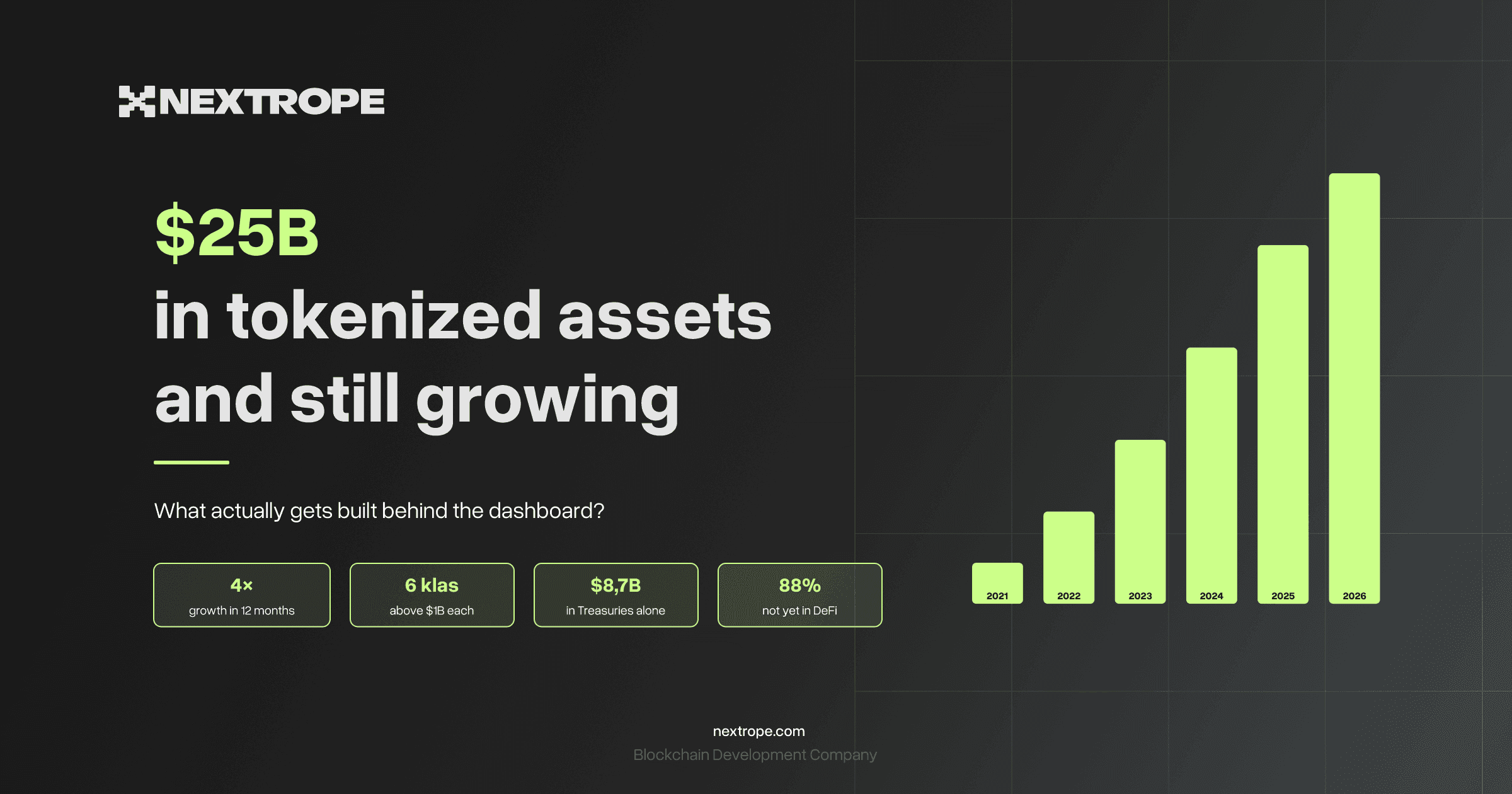

Tokenization operations

Operational datasets for issuance, lifecycle events, and platform reporting.

Stablecoin payments & settlement

Stateful payment flows with traceable operations and reporting outputs.

DeFi protocol operations

Indexing and monitoring for protocol activity, risk events, and operational workflows.

Cross-system synchronization

Keep on-chain state and off-chain records consistent across services and providers.

Analytics-ready foundations

Clean datasets and event models that power product analytics and internal tools.

Backend, Indexing & Data Pipelines - Frequently Asked Questions

- Why is custom indexing important for blockchain systems?

- Public block explorers are designed for human browsing, not operational queries. Custom indexers transform raw chain events into structured datasets optimized for your specific reporting needs, business invariants, and downstream integrations. This is the layer that makes on-chain data usable by finance, ops, and product teams - and it is where blockchain systems often break in production.

- How do you handle blockchain reorgs and missed events?

- We design indexers with explicit reorg handling - tracking confirmation depth, applying rollback logic when forks are detected, and reprocessing affected blocks. For missed events, we implement gap detection that compares indexed ranges against chain state and triggers targeted reprocessing. Idempotency is built into the pipeline, so reprocessing a block that was already indexed does not create duplicate records.
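To make the reorg and gap-detection steps concrete, here is a minimal in-memory sketch. It is an illustration under stated assumptions, not our production indexer: `Block`, `ReorgAwareIndex`, `find_gaps`, and the default confirmation depth of 12 are hypothetical names and values chosen for the example, and a real indexer would persist this state in a database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    number: int
    hash: str
    parent_hash: str


class ReorgAwareIndex:
    """Hypothetical in-memory index keyed by block height."""

    def __init__(self, confirmation_depth: int = 12):
        # Assumed default; the right depth depends on the chain.
        self.confirmation_depth = confirmation_depth
        self.blocks: dict[int, Block] = {}

    def is_final(self, number: int, head: int) -> bool:
        # A block is treated as final once enough blocks sit on top of it.
        return head - number >= self.confirmation_depth

    def apply(self, block: Block) -> list[int]:
        """Index a block and return the heights that were rolled back."""
        rolled_back: list[int] = []
        existing = self.blocks.get(block.number)
        if existing is not None and existing.hash == block.hash:
            return rolled_back  # already indexed: reprocessing is a no-op
        # Stored blocks at this height or above belong to a stale fork.
        for height in sorted(h for h in self.blocks if h >= block.number):
            del self.blocks[height]
            rolled_back.append(height)
        parent = self.blocks.get(block.number - 1)
        if parent is not None and parent.hash != block.parent_hash:
            # The fork is deeper than one block: drop the stale parent too,
            # so gap detection schedules it for reprocessing.
            del self.blocks[block.number - 1]
            rolled_back.insert(0, block.number - 1)
        self.blocks[block.number] = block
        return rolled_back


def find_gaps(indexed_heights, start: int, chain_head: int) -> list[tuple[int, int]]:
    """Return inclusive (first, last) ranges in [start, chain_head] not yet indexed."""
    indexed = set(indexed_heights)
    gaps: list[tuple[int, int]] = []
    gap_start = None
    for height in range(start, chain_head + 1):
        if height not in indexed:
            if gap_start is None:
                gap_start = height
        elif gap_start is not None:
            gaps.append((gap_start, height - 1))
            gap_start = None
    if gap_start is not None:
        gaps.append((gap_start, chain_head))
    return gaps
```

In this sketch, a block whose parent hash does not match the stored parent causes the stale parent to be dropped. The next `find_gaps` run then reports that height as missing, so the rollback and the targeted reprocessing share one code path.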

- Which chains do you support for indexing?

- We build custom indexers for EVM-compatible chains (Ethereum, Polygon, Base, Arbitrum, and others) and XRPL. Each has different ingestion characteristics: EVM chains expose event logs and call traces, while XRPL has its own transaction-type model and a ledger streaming API. We do not use generic indexing platforms when custom logic is needed for your schema.
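As a rough illustration of why the two ingestion paths differ, the sketch below maps an EVM log and an XRPL transaction onto one shared record. `ChainEvent`, `from_evm_log`, and `from_xrpl_tx` are hypothetical names. The EVM field names follow the JSON-RPC log object, and the XRPL input is assumed to be a flattened dict carrying the standard `hash`, `ledger_index`, `TransactionType`, and `Account` fields. Real payloads carry more fields and need validation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChainEvent:
    """Hypothetical chain-agnostic record consumed by downstream code."""
    chain: str
    event_id: str   # unique per chain; can double as an idempotency key
    position: int   # block number (EVM) or ledger index (XRPL)
    kind: str
    source: str


def from_evm_log(chain: str, log: dict) -> ChainEvent:
    # JSON-RPC encodes numeric quantities as hex strings such as "0x10".
    return ChainEvent(
        chain=chain,
        event_id=f"{log['transactionHash']}:{int(log['logIndex'], 16)}",
        position=int(log["blockNumber"], 16),
        kind=log["topics"][0],  # for non-anonymous events: the signature hash
        source=log["address"],
    )


def from_xrpl_tx(tx: dict) -> ChainEvent:
    # Assumes hash and ledger_index were merged into one dict upstream.
    return ChainEvent(
        chain="xrpl",
        event_id=tx["hash"],
        position=tx["ledger_index"],
        kind=tx["TransactionType"],
        source=tx["Account"],
    )
```

An EVM log is identified by transaction hash plus log index because one transaction can emit many events. In this sketch the XRPL transaction hash alone serves as the key, since the transaction itself is treated as the event.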

- What does an event-driven pipeline mean in practice?

- An event-driven pipeline listens for on-chain events (contract emissions, XRPL transactions), transforms them into normalized business events, enriches them with off-chain context (pricing, identity, metadata), and routes them to downstream systems (databases, message queues, webhooks). It includes retry logic, idempotency guarantees, and alerting on processing failures - so the pipeline is operational, not just functional.
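The flow described above (enrich, route, retry, and skip duplicates) can be sketched in a few lines. This is a simplified, single-process illustration: the `Pipeline` class, its parameters, and the in-memory `processed` set are assumptions made for the example. A production pipeline would keep idempotency keys and dead letters in durable storage.

```python
import time
from typing import Callable

Event = dict
Sink = Callable[[Event], None]


class Pipeline:
    """Hypothetical pipeline: enrich an event, then deliver it to each sink."""

    def __init__(self, enrich: Callable[[Event], Event], sinks: list[Sink],
                 max_attempts: int = 3, backoff_seconds: float = 0.0):
        self.enrich = enrich
        self.sinks = sinks
        self.max_attempts = max_attempts
        self.backoff_seconds = backoff_seconds
        self.processed: set[str] = set()  # stand-in for a durable key store
        self.dead_letters: list[tuple[Event, str]] = []

    def handle(self, raw: Event) -> bool:
        """Return True if the event was delivered now or on an earlier call."""
        key = raw["event_id"]
        if key in self.processed:
            return True  # duplicate delivery: nothing to do
        pending = list(self.sinks)
        event = None
        last_error = ""
        for attempt in range(1, self.max_attempts + 1):
            try:
                if event is None:
                    event = self.enrich(dict(raw))
                # Deliver sink by sink, so a retry resumes at the sink that
                # failed instead of calling the successful ones again.
                while pending:
                    pending[0](event)
                    pending.pop(0)
                self.processed.add(key)
                return True
            except Exception as exc:
                last_error = repr(exc)
                time.sleep(self.backoff_seconds * attempt)  # linear backoff
        # Retries exhausted: park the event for alerting and manual replay.
        self.dead_letters.append((raw, last_error))
        return False
```

Events that exhaust their retries land in `dead_letters` rather than being dropped, which gives alerting on processing failures something to watch.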