Introduction

Artificial intelligence (AI) and language models such as GPT are changing the way businesses work. From automating repetitive processes to providing analysis and predictions, AI is quickly becoming a crucial tool for businesses that want to stay competitive in today's fast-paced economy. In this article, we look at how companies are using AI and GPT-like models to increase productivity, improve efficiency, and grow revenue. We cover a range of applications, from customer service and financial analysis to healthcare, education, logistics, robotics, and gaming. We also look at how GPT-like models are used specifically in content generation and natural language processing to scale up communication and human-computer interaction.

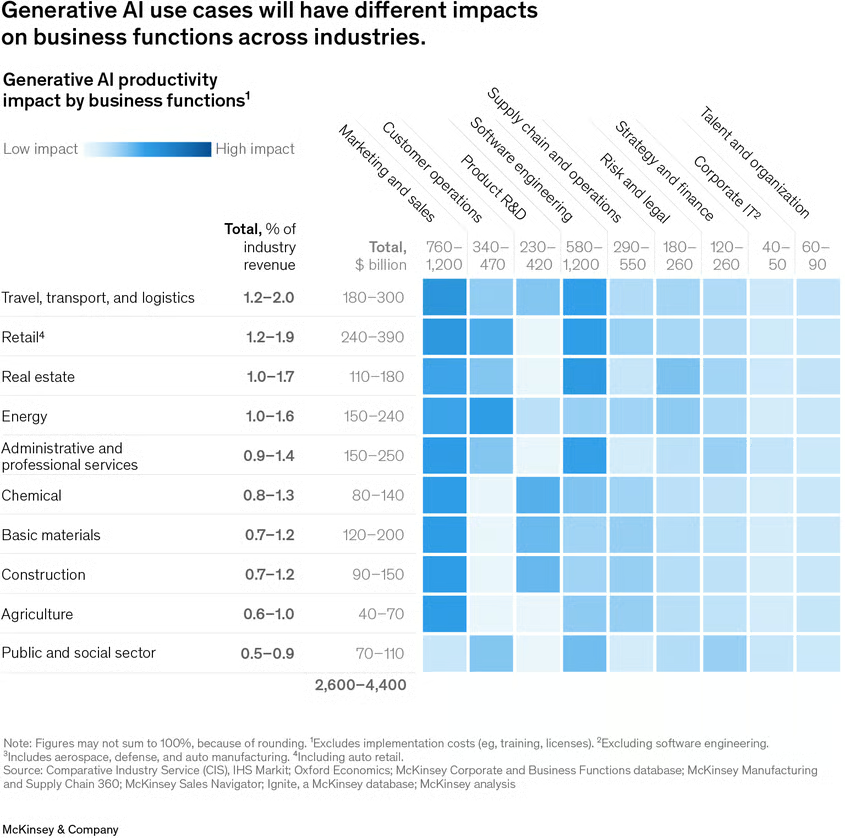

AI implementation by sector

AI and models like GPT can be particularly beneficial in a variety of sectors, including but not limited to:

| Sector | Applications |
| --- | --- |
| Natural Language Processing (NLP) | Language translation, text summarization, question answering |
| Content Creation | Automated written content generation (news articles, product descriptions, social media posts) |
| Business | Task automation (customer service, sales, marketing), financial forecasting and analysis |
| Healthcare | Medical diagnosis, drug discovery, personalized medicine |
| Education | Personalized learning experiences, grading and feedback |
| Transport and Logistics | Self-driving cars, supply chain management |
| Robotics | Object recognition, navigation, manipulation |
| Gaming | Realistic and engaging gameplay, new types of games |

GPT-like Models in NLP and Content Creation: Automating Writing and Personalizing Content

GPT-like models have demonstrated strong capabilities in Natural Language Processing (NLP) and content generation. Language models such as GPT-3 can handle a range of NLP tasks, including language translation, text summarization, and question answering.

Automated writing is one of the most popular uses of GPT-like models in NLP and content generation. GPT-3 can automatically produce written content such as blog posts, product descriptions, and social media updates. Because it generates coherent, grammatically sound text, automating content production with GPT-3 can save businesses a significant amount of time and resources. This makes GPT-3 well suited to tasks such as drafting reports, composing emails, and writing chatbot scripts for customer support.

In addition to automated writing, GPT-like models can be used to create personalized content for customers. For instance, by analyzing customer data and generating content tailored to a user's interests and preferences, GPT-3 can provide personalized product suggestions or targeted advertising. This can help companies improve their marketing and increase customer engagement.
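To make the idea concrete, here is a minimal sketch of the first step in such a pipeline: turning customer data into a prompt for a GPT-like model. The `CustomerProfile` record, its fields, and `build_personalized_prompt` are hypothetical names chosen for illustration. The sketch deliberately stops before any model call, since which text-generation API a business uses, and with what parameters, is outside the scope of this article.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerProfile:
    """Hypothetical customer record; a real system might pull this from a CRM."""
    name: str
    interests: list[str] = field(default_factory=list)
    recent_purchases: list[str] = field(default_factory=list)


def build_personalized_prompt(profile: CustomerProfile, product: str) -> str:
    """Turn customer data into an instruction for a GPT-like model.

    The returned string would be sent to whatever text-generation service
    the business uses; that call is intentionally left out here.
    """
    interests = ", ".join(profile.interests) or "no stated interests"
    purchases = ", ".join(profile.recent_purchases) or "no recent purchases"
    return (
        f"Write a short, friendly product description of {product} "
        f"for a customer named {profile.name}.\n"
        f"Their interests: {interests}.\n"
        f"Their recent purchases: {purchases}.\n"
        "Highlight the features most relevant to these interests."
    )


if __name__ == "__main__":
    customer = CustomerProfile(
        name="Alex",
        interests=["hiking", "photography"],
        recent_purchases=["trail shoes"],
    )
    print(build_personalized_prompt(customer, "a lightweight camera backpack"))
```

Keeping prompt construction separate from the model call makes it easy to review exactly which customer data is sent to the model.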

Automation: Using AI to Simplify Business Processes

Automating processes is one of the most visible ways that organizations use AI. From customer service chatbots to automated financial analysis, AI can handle a wide range of repetitive tasks, freeing employees to concentrate on higher-value work. AI-powered chatbots can handle simple customer service requests, such as answering frequently asked questions, while more sophisticated systems can manage more complex problems. Financial analysis tasks such as fraud detection and trend prediction can also be automated with machine learning models.
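As a rough illustration of the simplest tier of chatbot described above, the sketch below matches a customer's question against a small FAQ using word overlap (Jaccard similarity) and hands off to a human agent when nothing matches well enough. The FAQ entries, the `answer` function, and the 0.3 threshold are assumptions made up for this example; production chatbots, including GPT-based ones, rely on far more capable language understanding than keyword overlap.

```python
import re

# Hypothetical FAQ entries; a real deployment would load these from a knowledge base.
FAQ = {
    "What are your opening hours?": "We are open 9:00-17:00, Monday to Friday.",
    "How do I reset my password?": "Use the 'Forgot password' link on the login page.",
    "Where is my order?": "You can track your order from the 'My orders' page.",
}


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def answer(question: str, threshold: float = 0.3) -> str:
    """Return the answer of the most similar FAQ entry, or escalate to a human.

    Similarity is Jaccard overlap: size of the shared-word set divided by
    size of the combined-word set.
    """
    q = tokens(question)
    best_score, best_answer = 0.0, None
    for faq_question, faq_answer in FAQ.items():
        f = tokens(faq_question)
        union = q | f
        score = len(q & f) / len(union) if union else 0.0
        if score > best_score:
            best_score, best_answer = score, faq_answer
    if best_answer is None or best_score < threshold:
        return "Let me connect you with a human agent."
    return best_answer


if __name__ == "__main__":
    print(answer("How do I reset my password?"))
    print(answer("Can I get a refund for a damaged item?"))
```

The escalation branch mirrors the division of labour described in the paragraph above: routine questions are answered automatically, and anything the system is unsure about goes to a person.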

AI in Healthcare: Diagnosis and Treatment

AI is being applied in many ways in healthcare to improve efficiency and precision. For instance, AI-powered systems can help clinicians diagnose illnesses by reviewing medical images and making recommendations, which can improve diagnostic accuracy and reduce the risk of human error. Drug discovery is another area where AI is used: it can analyze enormous amounts of data, including genetic data, to identify potential new treatments and medications. AI is also being used to develop individualized treatment plans that take into account a patient's medical history, genetics, and other personal characteristics.

Intelligent Tutoring and Personalized Learning with AI in Education

In education, AI is being used to help teachers grade assignments and give students feedback. Based on a student's strengths, weaknesses, and preferred learning style, AI can generate a customized learning plan. It also powers intelligent tutoring programs that support teachers by offering students tailored feedback and assistance both inside and outside the classroom. By automating grading and assessment, AI can save teachers time and make the education system more effective.

AI-Optimized Logistics and Transportation: From Supply Chain Management to Self-Driving Cars

The transportation and logistics sector is also using AI to increase productivity and cut costs. Self-driving cars are one example: they aim to improve road safety and reduce the number of accidents caused by human error. Supply chain management is another area AI can optimize, by forecasting demand, analyzing data, and supporting better decisions. AI can also improve fleet management by tracking the location and condition of vehicles and anticipating maintenance needs.
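Demand forecasting can take many forms. One of the simplest classical methods is simple exponential smoothing, in which each new observation nudges a running estimate (the "level") toward the latest demand; the final level serves as the forecast for the next period. The sketch below is an illustration under assumptions, not a description of any particular company's system: `exponential_smoothing_forecast`, `reorder_quantity`, the smoothing factor `alpha`, and the demand figures are all hypothetical.

```python
def exponential_smoothing_forecast(demand: list[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast using simple exponential smoothing.

    level_t = alpha * demand_t + (1 - alpha) * level_(t-1)
    The final level is returned as the forecast for the next period.
    """
    if not demand:
        raise ValueError("demand history must not be empty")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = float(demand[0])  # initialise the level with the first observation
    for observed in demand[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def reorder_quantity(forecast: float, on_hand: float, safety_stock: float) -> float:
    """Order enough to cover forecast demand plus a safety buffer, never less than zero."""
    return max(0.0, forecast + safety_stock - on_hand)


if __name__ == "__main__":
    weekly_demand = [100, 120, 110, 130]  # hypothetical units sold per week
    forecast = exponential_smoothing_forecast(weekly_demand, alpha=0.5)
    print(forecast, reorder_quantity(forecast, on_hand=80, safety_stock=15))
```

For the hypothetical weekly demand of 100, 120, 110, and 130 units with `alpha = 0.5`, the forecast for next week is 120 units; with 80 units on hand and a safety stock of 15, the sketch suggests ordering 55. A larger `alpha` reacts faster to recent changes, while a smaller one smooths out noise.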

AI-Powered Robotics: Enhancing Capabilities and Real-World Functionality

In robotics, AI is being applied to make robots more capable and useful. In object recognition, for instance, AI enables robots to recognize and interact with objects in the real world. In navigation, AI helps robots move autonomously through challenging environments. AI can also improve robots' manipulation abilities, allowing them to carry out a wider variety of tasks, such as grasping and handling real-world objects. With AI, robots are becoming better at working in real-world settings and at performing tasks that were previously out of reach.
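At its core, navigation means finding a collision-free route. A minimal sketch, assuming the robot's surroundings have already been turned into a grid map (`#` for obstacles, `.` for free space), is breadth-first search, which finds a shortest path when every move costs the same. The grid format and the `shortest_path` function are hypothetical; real robots typically combine perception, localization, and more sophisticated planners (A*, for example) on much richer maps.

```python
from collections import deque
from typing import Optional

Cell = tuple[int, int]  # (row, column)


def shortest_path(grid: list[str], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """Breadth-first search on a 4-connected grid map.

    '#' marks an obstacle and '.' a free cell. Returns the cells from start
    to goal (inclusive), or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    came_from: dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the recorded predecessors to rebuild the route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and (nr, nc) not in came_from):
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


if __name__ == "__main__":
    warehouse = ["...", ".#.", "..."]  # hypothetical 3x3 map with one obstacle
    print(shortest_path(warehouse, (0, 0), (2, 2)))
```

Because breadth-first search explores cells in order of distance from the start, the first time it reaches the goal it has found a route with the fewest moves.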

From Realistic Gameplay to Game Development: AI in the Gaming Industry

The video game industry also uses AI to create more realistic and engaging gameplay and to build new game genres. Game AI, for example, makes gameplay more realistic and engaging by providing more lifelike computer-controlled characters and environments.

AI can also be used to create new game mechanics and genres, such as games that adapt to the player's preferences and skill level. AI can improve game testing as well, speeding up the process of finding and fixing bugs and giving players a better experience. As AI continues to develop, we can expect further advances in its use in gaming, pushing the limits of what is possible in the industry.
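Games that adapt to a player's skill level often use some form of dynamic difficulty adjustment. The sketch below is a deliberately simple, rule-based version with a hypothetical class name (`DifficultyAdjuster`), window size, and win-rate thresholds: it watches the player's recent results and raises or lowers the difficulty when they win too often or too rarely. Commercial games may rely on learned player models instead; this only illustrates the feedback loop.

```python
from collections import deque


class DifficultyAdjuster:
    """Rule-based dynamic difficulty adjustment (hypothetical illustration).

    Tracks the player's last `window` outcomes and raises the difficulty when
    the win rate is above `high`, or lowers it when the win rate is below `low`.
    """

    def __init__(self, level: int = 5, window: int = 5,
                 min_level: int = 1, max_level: int = 10,
                 low: float = 0.4, high: float = 0.7) -> None:
        self.level = level
        self.results: deque[bool] = deque(maxlen=window)  # True = player won
        self.min_level, self.max_level = min_level, max_level
        self.low, self.high = low, high

    def record(self, player_won: bool) -> int:
        """Store one outcome and return the (possibly updated) difficulty level."""
        self.results.append(player_won)
        if len(self.results) < self.results.maxlen:
            return self.level  # wait until the window is full before judging
        win_rate = sum(self.results) / len(self.results)
        if win_rate > self.high:
            self.level = min(self.level + 1, self.max_level)
            self.results.clear()
        elif win_rate < self.low:
            self.level = max(self.level - 1, self.min_level)
            self.results.clear()
        return self.level


if __name__ == "__main__":
    adjuster = DifficultyAdjuster()
    print([adjuster.record(True) for _ in range(5)])
```

Clearing the history after each change gives the player several encounters at the new level before the next adjustment, which keeps the difficulty from swinging back and forth.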

Conclusion

In conclusion, integrating AI and GPT-like models into numerous industries and businesses is proving highly advantageous. These technologies are transforming how we conduct business, improving medical diagnosis, personalizing education, optimizing logistics and transportation, and even reshaping the gaming industry. GPT-like models have enormous potential for content creation and natural language processing, and businesses should be aware of the advantages these technologies offer. The integration of AI and GPT-like models across industries has a promising future.
