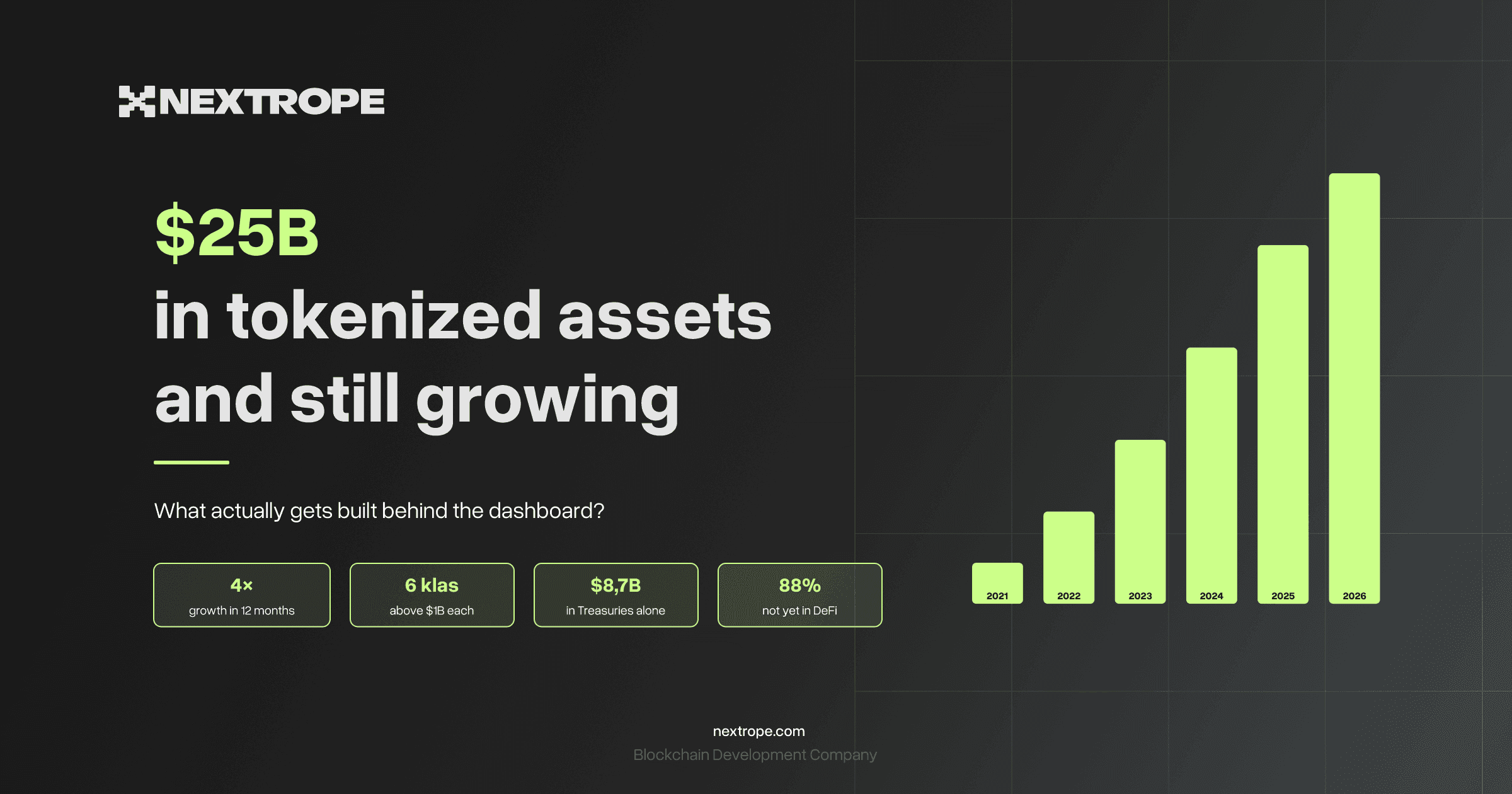

Tokenization Platforms

End-to-end issuance and lifecycle logic for digital and real-world assets. Smart contracts, backend services, and integrations designed for production operations.

Stablecoin Payments & Settlement

Stablecoin transfers and settlement flows with traceable operations and reporting. Built to connect on-chain movement with off-chain finance processes.

DeFi Lending & Yield Infrastructure

Lending, yield, and collateral mechanics implemented with production monitoring in mind. We ship the full stack: contracts, backend services, indexing, and operational workflows.

XRPL / Ripple Development

XRPL payments and asset flows integrated with external systems. Designed for throughput, cost efficiency, and long-term maintainability.

Backend, Indexing & Applied Automation

The operational layer behind digital asset products. We build APIs, indexers, and data pipelines that keep on-chain and off-chain records consistent and let workflows be automated safely.

On-chain Production Support

Launch is only the start. We keep on-chain systems reliable under real operational load through incident response, monitoring, upgrades, and runbooks designed to protect funds, data integrity, and business continuity.