From the creators' perspective, we steer supply and demand in crypto markets to incentivize (or disincentivize) certain behaviors in a way that benefits the project.

Often, a project’s best interest is seen as equivalent to a high token price. For that reason, tokenomics often incentivizes participating in pyramid schemes that give an illusion of growth and value appreciation. Here we explore how to design sustainable tokenomics that will help your project thrive in the long run.

Price Swing Effects

As an entrepreneur, the valuation of your digital asset often determines if you're seen as a visionary or an impostor. Consequently, many teams prioritize strategies aimed at boosting their token's value, frequently through methods like offering exorbitantly high annual percentage yields for token staking. Other tactics include token destruction or repurchase schemes, financed by means other than actual earnings. While these strategies may temporarily elevate excitement and price, they fail to enhance the intrinsic worth of the platform. This leads to significant price instability and diminishes the platform's ability to withstand hostile actions or negative market trends. Paradoxically, the pursuit of elevated prices typically backfires. Instead, the focus should be on reducing price volatility, which supports steady and long-term development.

Price per Token

The initial price of a token unit should reflect the utility it provides. That price equals the total value of the project divided by the quantity of tokens in circulation. Theoretically, the nominal value of tokens shouldn’t matter: $100 worth of tokens corresponds to the same share of market cap, regardless of whether we have 100 tokens worth $1 each or 1 token worth $100. But just like in traditional markets, human psychology plays a big role. Market participants show a preference for tokens priced between $10 and $100. Such tokens statistically perform slightly better on the market. For this reason, we suggest choosing a supply quantity that will cause the price per token to oscillate in the $10-$100 range.

At the opposite end, tokens priced below $0.01 have been shown to underperform and to be more volatile.
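The relationship between project value, circulating supply, and price per token can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the function names and the example valuation of $50 million are assumptions made for the sketch, while the $10-$100 band comes from the text above.

```python
def price_per_token(project_value_usd: float, circulating_supply: float) -> float:
    """Price implied by dividing total project value by tokens in circulation."""
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    return project_value_usd / circulating_supply


def supply_range_for_price_band(
    project_value_usd: float,
    min_price: float = 10.0,   # lower end of the preferred price band
    max_price: float = 100.0,  # upper end of the preferred price band
) -> tuple[float, float]:
    """Return (min_supply, max_supply) that keeps the implied price in the band."""
    if project_value_usd <= 0 or min_price <= 0 or max_price < min_price:
        raise ValueError("values must be positive and max_price >= min_price")
    # A higher price needs fewer tokens, so the bounds swap.
    return project_value_usd / max_price, project_value_usd / min_price


# Hypothetical project valued at $50 million.
low_supply, high_supply = supply_range_for_price_band(50_000_000)
example_price = price_per_token(50_000_000, 1_000_000)
```

For the assumed valuation, any circulating supply between `low_supply` and `high_supply` keeps the implied price inside the band. The valuation itself moves with the market, so the range is a starting point rather than a guarantee.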

Supply

The supply side of tokenomics covers all the mechanisms that affect the number of tokens in circulation and their allocation structure.

While supply is important for tokenomics design, it’s not as significant as people think. In 99% of cases, a project’s value relies mostly on demand: product adoption by users and the ability to generate and capture value.

Initial and maximum supply

How many tokens do we want to initially distribute, and what’s the maximum number of tokens? This relates to the maximum inflation rate, i.e. the total dilution of the tokens' value over the lifespan of a project. Dividing the maximum supply by the initial supply gives the total dilution multiple; subtracting 1 from that multiple gives the maximum cumulative inflation rate.
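The dilution arithmetic can be made concrete with a short Python sketch. The function name and the example supplies (200 million initial, 1 billion maximum) are assumptions chosen for illustration. Note that the supply ratio is a multiple, and the cumulative inflation is that multiple minus one.

```python
def max_dilution(initial_supply: float, max_supply: float) -> dict[str, float]:
    """Summarize lifetime dilution implied by initial and maximum supply."""
    if initial_supply <= 0 or max_supply < initial_supply:
        raise ValueError("need 0 < initial_supply <= max_supply")
    multiple = max_supply / initial_supply
    return {
        # How many times the supply grows from launch to the cap.
        "supply_multiple": multiple,
        # Cumulative inflation as a fraction (4.0 means 400%).
        "cumulative_inflation": multiple - 1,
        # Share of the final supply that the initial tokens represent.
        "initial_share_of_max": initial_supply / max_supply,
    }


# Hypothetical token: 200M distributed at launch, capped at 1B.
summary = max_dilution(200_000_000, 1_000_000_000)
```

In this assumed example the supply grows fivefold, which is 400% cumulative inflation, and the tokens distributed at launch end up representing 20% of the maximum supply.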

It doesn’t matter whether the circulating supply makes up 20% or 80% of the maximum supply. In fact, you can be successful even without a capped maximum supply. Many of the 100 projects with the largest capitalization have no capped supply, with Ethereum being the prime example.

Interestingly, supply increases don’t matter that much in the short term. On a month-to-month basis, the correlation between token emission rate and price is less than 5%. For that reason, you shouldn’t worry too much about the dilution of value. As long as the annualized inflation rate is below 100%, your project will be stable.
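To compare an emission schedule against the 100% annualized threshold, a monthly emission rate can be converted to an annual figure. The sketch below assumes that emissions compound, i.e. each month's new tokens are a fixed fraction of the supply circulating at that time; a project with fixed absolute emissions would need a different calculation. The function names are illustrative.

```python
def annualized_inflation(monthly_emission_rate: float) -> float:
    """Compound a constant monthly supply growth rate over 12 months."""
    if monthly_emission_rate < 0:
        raise ValueError("monthly_emission_rate must be non-negative")
    return (1 + monthly_emission_rate) ** 12 - 1


def is_below_threshold(monthly_emission_rate: float, threshold: float = 1.0) -> bool:
    """Check the annualized rate against a threshold (1.0 means 100%)."""
    return annualized_inflation(monthly_emission_rate) < threshold


five_percent = annualized_inflation(0.05)  # roughly 0.80, i.e. about 80% per year
six_percent = annualized_inflation(0.06)   # slightly above 1.0, i.e. over 100% per year
```

Under the compounding assumption, the boundary sits just below 6% per month, so seemingly small monthly rates can add up to triple-digit annual inflation.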

Allocation

A typical allocation structure, often considered the industry’s best practice, falls within the following ranges:

- Team: 10% - 20%

- Venture Capital: 10% - 20%

- Advisors: 3% - 5%

- Treasury: 15% - 30%

- Protocol emissions (e.g. staking reward): 30% - 50%

- Airdrops (optional): 3% - 7%

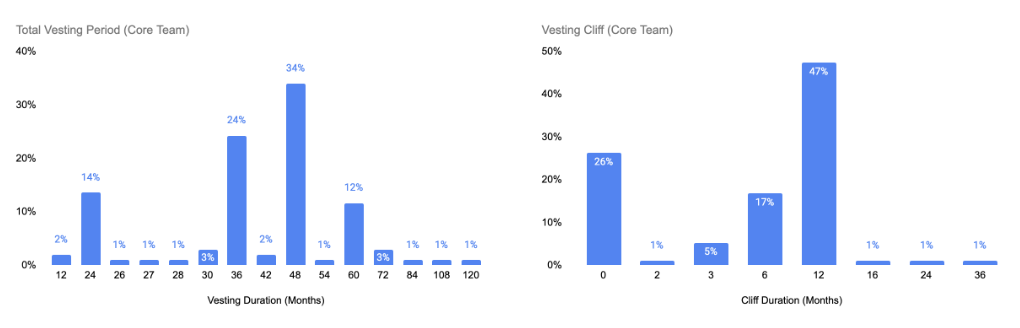
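A proposed allocation can be checked against these ranges programmatically. The following Python sketch is one possible way to do it: the bucket names, the `check_allocation` helper, and the sample split are assumptions for illustration, while the percentage ranges are the ones listed above. Airdrops are treated as optional, so a 0% airdrop share is accepted.

```python
# Typical ranges from the list above, in percent of total supply.
TYPICAL_RANGES = {
    "team": (10, 20),
    "venture_capital": (10, 20),
    "advisors": (3, 5),
    "treasury": (15, 30),
    "protocol_emissions": (30, 50),
    "airdrops": (3, 7),
}
OPTIONAL_BUCKETS = {"airdrops"}


def check_allocation(allocation: dict[str, float]) -> list[str]:
    """Return a list of human-readable problems; an empty list means no issues."""
    problems = []
    total = sum(allocation.values())
    if abs(total - 100) > 1e-9:
        problems.append(f"allocation sums to {total}%, expected 100%")
    for bucket, (low, high) in TYPICAL_RANGES.items():
        share = allocation.get(bucket, 0)
        if bucket in OPTIONAL_BUCKETS and share == 0:
            continue  # optional bucket left out on purpose
        if not low <= share <= high:
            problems.append(f"{bucket}: {share}% is outside {low}%-{high}%")
    return problems


# Hypothetical split that stays inside every range.
sample = {
    "team": 15,
    "venture_capital": 15,
    "advisors": 5,
    "treasury": 20,
    "protocol_emissions": 40,
    "airdrops": 5,
}
sample_problems = check_allocation(sample)
```

The ranges are guidelines rather than hard rules, so a reported problem is a prompt to justify the deviation, not necessarily a reason to change the split.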

Vesting

Vesting is the process of locking a portion of tokens for a chosen amount of time and gradually releasing them. It’s a concept taken from the world of startups. Traditionally, these companies would vest the equity allocated to founders so that they can’t abandon the project early. If founders could sell their equity in the early stages, they might lose motivation to keep working on the project. In DeFi, on top of aligning incentives, vesting reduces volatility and big price dumps in the early stages.

Vesting usually applies to institutional investors, advisors, and founders. The industry standard is a vesting period of between 2 and 5 years.
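A vesting schedule of this kind can be sketched as a simple function of elapsed time. The version below assumes an initial lock during which nothing is released, followed by a linear monthly release; real schedules vary, and the function name, parameters, and the 12-month lock plus 36-month release are illustrative assumptions, not a standard.

```python
def vested_amount(
    total_tokens: float,
    months_elapsed: float,
    lock_months: float,
    release_months: float,
) -> float:
    """Tokens released so far: nothing during the lock, then a linear release."""
    if release_months <= 0 or lock_months < 0:
        raise ValueError("release_months must be positive and lock_months non-negative")
    if months_elapsed <= lock_months:
        return 0.0
    # Fraction of the release period that has passed, capped at 100%.
    progress = min((months_elapsed - lock_months) / release_months, 1.0)
    return total_tokens * progress


# Hypothetical team grant: 1M tokens, 12-month lock, 36-month linear release
# (4 years in total, within the 2-5 year range mentioned above).
schedule = {
    month: vested_amount(1_000_000, month, lock_months=12, release_months=36)
    for month in (6, 12, 30, 48, 60)
}
```

In this assumed schedule nothing is available through month 12, half of the grant is released by month 30, and the full grant by month 48.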

Demand

The demand side concerns people’s subjective willingness to buy the tokens. The reasons vary: demand may come from the utility of your tokens, speculation, or economic incentives provided by your protocol. Sometimes people act irrationally, so token demand has to be considered in the context of behavioral economics.

Utility

Your product should provide real value to the customer, and be able to capture some of it. If the price of your token increases for any reason not related to its utility, then it’s due to speculation on utility in the future.

Expected Utility

If you’re looking to fund your project before developing an MVP, you rely on investors’ trust in your ability to deliver utility in the future. A key way to increase this trust, and to be more successful with an ICO, is to have a strong founding team and an innovative idea. You should show people that you’re likely to deliver something that will have a lot of value to a lot of users.

Hype

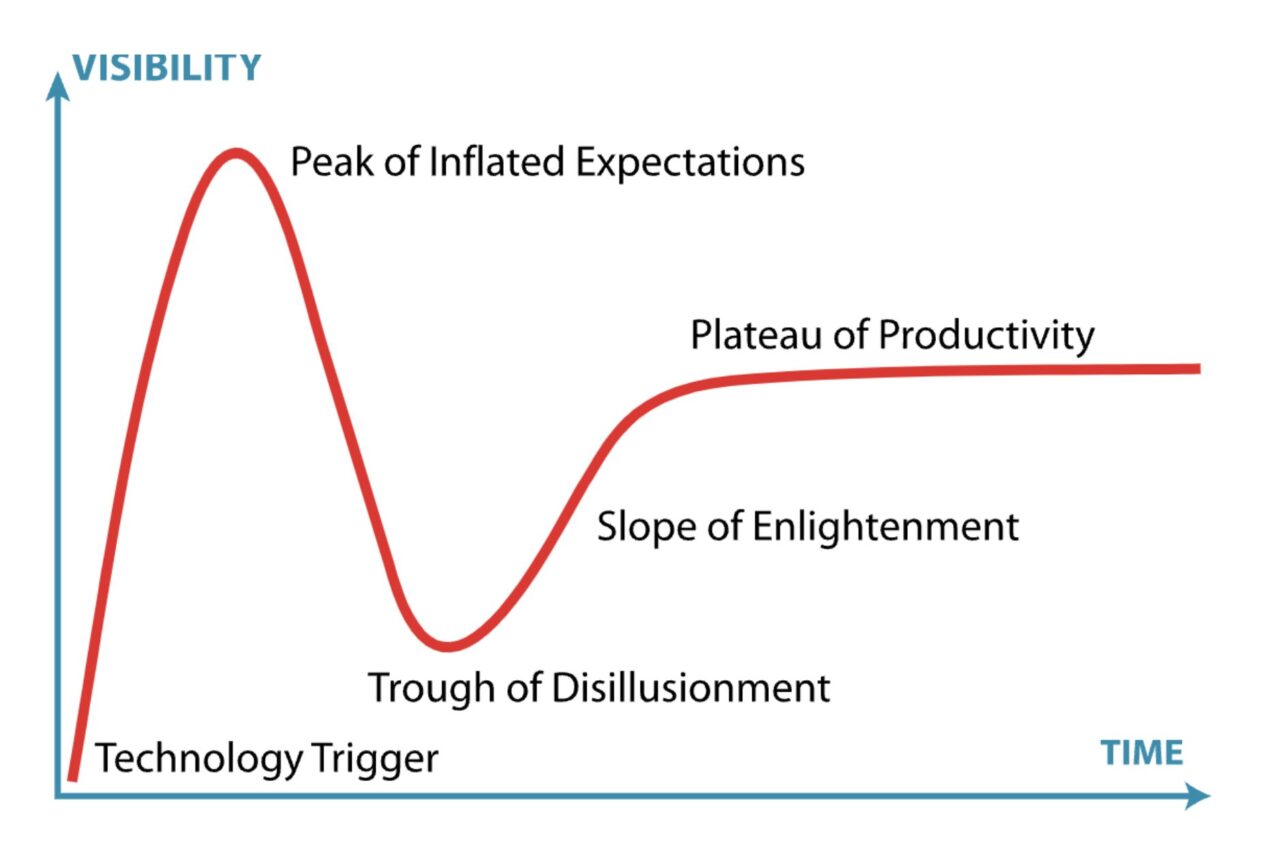

There are also cases when demand comes from pure hype. While this euphoria may be pleasant in the short-term, it's worth remembering that in the long term, a crash will follow.

Conclusion

Supply and demand are key concepts in the crypto space, just like in the real economy. Although the equilibrium is ultimately set by market forces, we can influence it through various adaptive mechanisms. It’s key to remember that they can only work if your product provides actual value to customers, because customer-driven demand is the only sustainable way of increasing a project’s value.

If you're looking to design a sustainable tokenomics model for your DeFi project, please reach out to contact@nextrope.com. Our team is ready to help you create a tokenomics structure that aligns with your project's long-term growth and market resilience.

FAQ

How to know what portion of demand can be attributed to speculation?

- The Fear and Greed Index is often used to measure market sentiment in that regard.

Can supply and demand mechanisms be manipulated in crypto markets?

- Yes, it’s not uncommon for big investors to engage in speculative attacks.

How does supply affect the tokenomics of a project?

- There are many ways in which supply affects tokenomics. The key things to consider are the emission rate and the allocation.
